Inside the Fold

This week: AgentFold, Atlas of Human-AI Interaction, Industry Influence, Interviews, Pinot

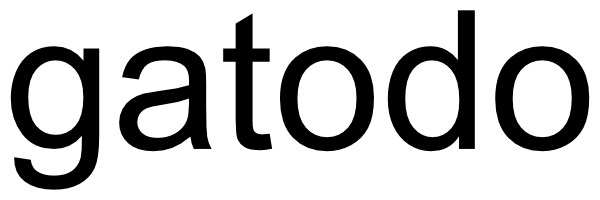

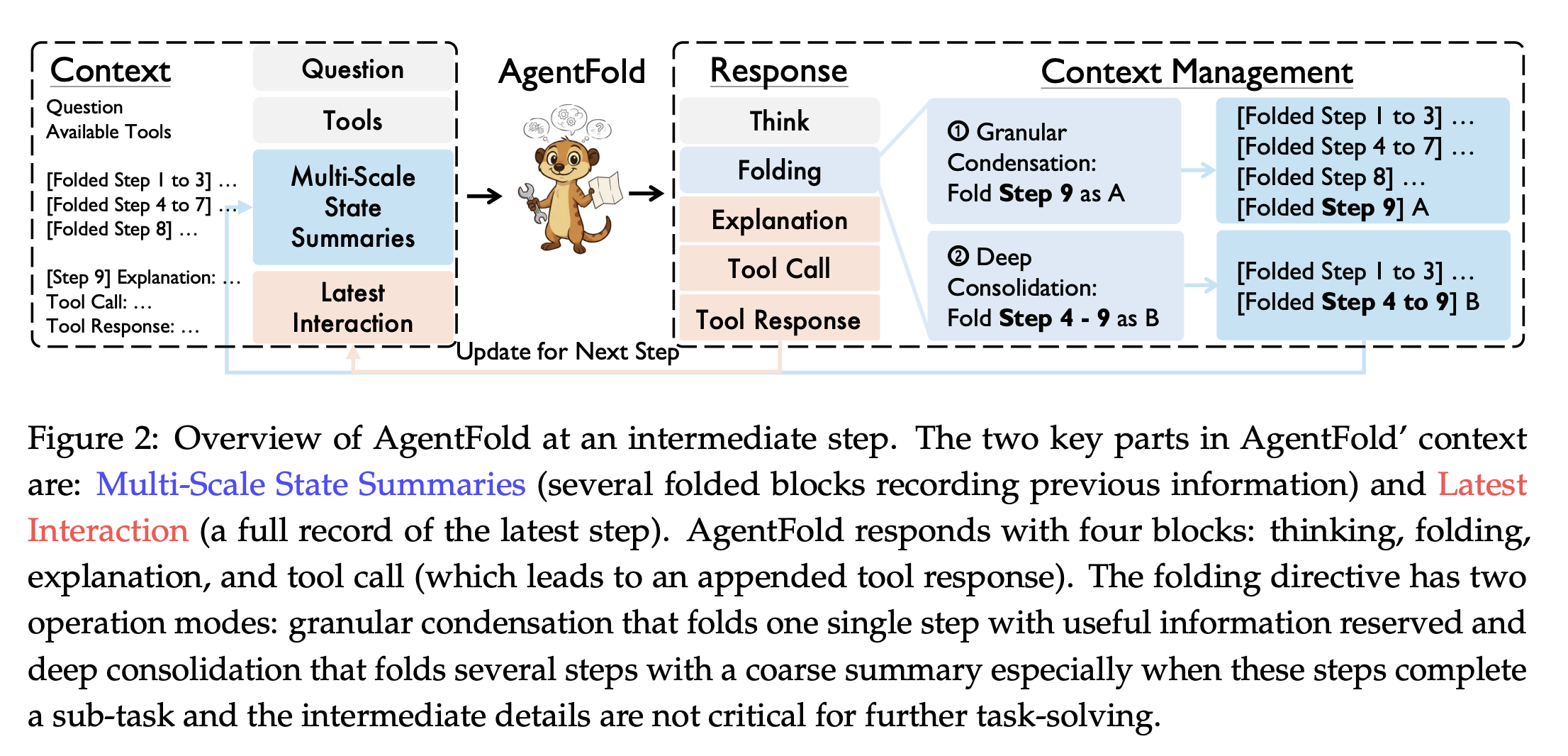

AgentFold: Long-Horizon Web Agents with Proactive Context Management

Right idea!

“AgentFold treats its context as a dynamic cognitive workspace to be actively sculpted, rather than a passive log to be filled. At each step, it learns to execute a ‘folding’ operation, which manages its historical trajectory at multiple scales: it can perform granular condensations to preserve vital, fine-grained details, or deep consolidations to abstract away entire multistep sub-tasks.”

“We move beyond these static policies by empowering the agent to act as a self-aware knowledge manager, equipped with a proactive ‘fold’ operation to dynamically sculpt its context at multiple scales. This mechanism allows the agent to preserve fine-grained details via Granular Condensation while abstracting away irrelevant history with Deep Consolidation. Our experiments validate the power of this approach: the AgentFold-30B-A3B model establishes a new state of the art for open-source agents, outperforming models over 20 times its size like DeepSeek-V3.1-671B and proving highly competitive against leading proprietary agents such as OpenAI’s o4-mini. Furthermore, its exceptional context efficiency enables truly long-horizon problem-solving by supporting hundreds of interaction steps within a manageable context.”

Rui Ye, Zhongwang Zhang, Kuan Li, Huifeng Yin, Zhengwei Tao, Yida Zhao, Liangcai Su, Liwen Zhang, Zile Qiao, Xinyu Wang, Pengjun Xie, Fei Huang, Siheng Chen, Jingren Zhou, Yong Jiang (2025) AgentFold: Long-Horizon Web Agents with Proactive Context Management

https://arxiv.org/abs/2510.24699
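
To make the two folding scales concrete, here is a toy sketch of how I picture them. It is not the authors’ implementation: the `Block` and `Workspace` names, the `merge_from` parameter, and the idea that the caller hands in the summary text are all my own assumptions (in the paper, the agent itself learns what to write and when to fold).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Block:
    """One summary covering steps start..end (inclusive) of the trajectory."""
    start: int
    end: int
    summary: str


@dataclass
class Workspace:
    """Hypothetical context workspace: an ordered list of summary blocks."""
    blocks: list = field(default_factory=list)

    def fold(self, step: int, summary: str, merge_from: Optional[int] = None) -> None:
        """Fold the latest step into the context.

        merge_from=None: granular condensation, the step gets its own block.
        merge_from=k:    deep consolidation, every block from step k onward is
                         replaced, together with the new step, by one block.
        """
        if merge_from is None:
            self.blocks.append(Block(step, step, summary))
            return

        kept = [b for b in self.blocks if b.start < merge_from]
        # Refuse to split an existing block in half (a simplifying assumption).
        if any(b.end >= merge_from for b in kept):
            raise ValueError("merge_from falls inside an existing block")
        kept.append(Block(merge_from, step, summary))
        self.blocks = kept

    def render(self) -> str:
        """Flatten the workspace into the text that would go back in the prompt."""
        return "\n".join(f"[steps {b.start}-{b.end}] {b.summary}" for b in self.blocks)
```

Folding steps 1 to 3 one at a time leaves three blocks. Calling `fold(4, "...", merge_from=2)` then collapses steps 2 to 4 into a single block, which is how the context can stay small over hundreds of steps.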

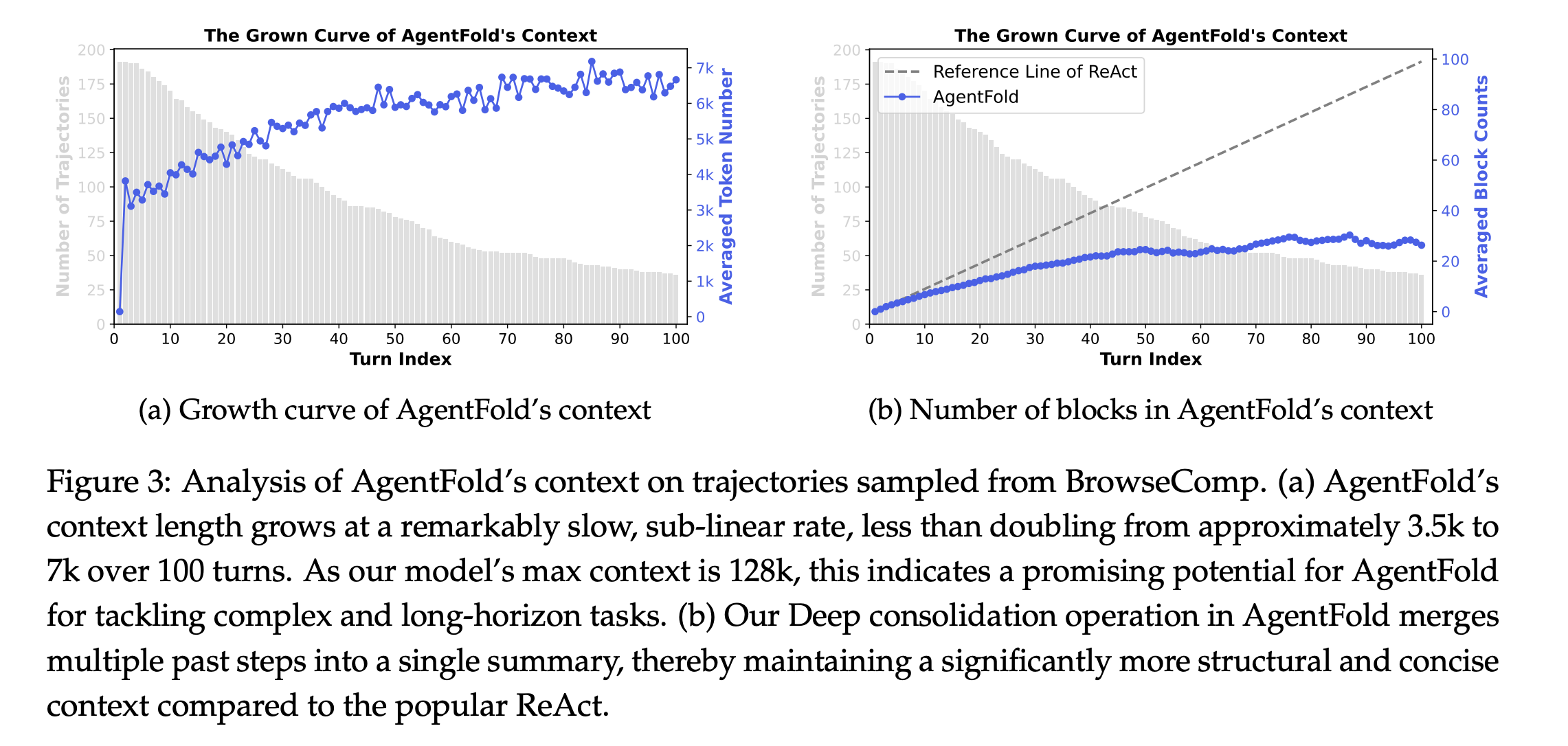

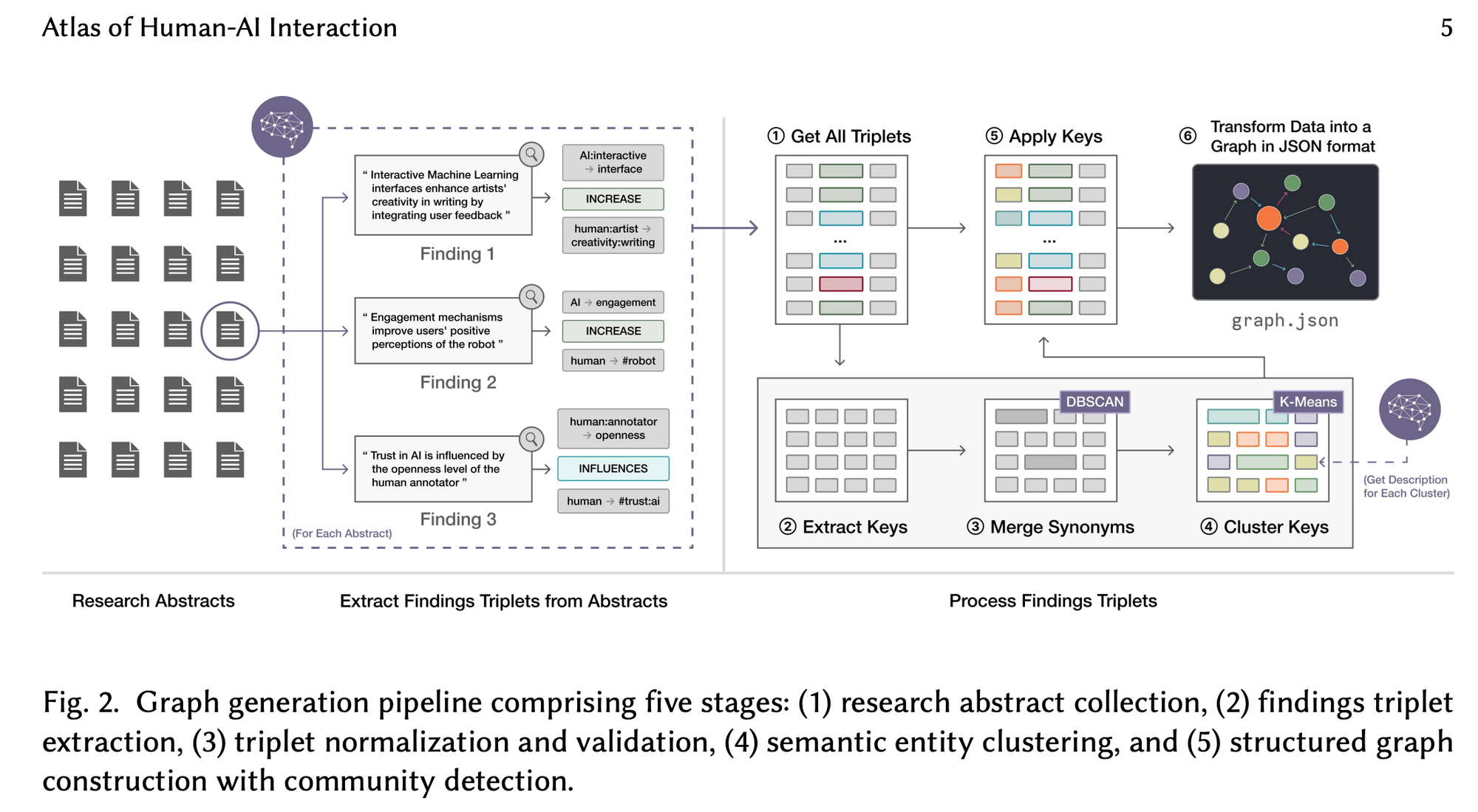

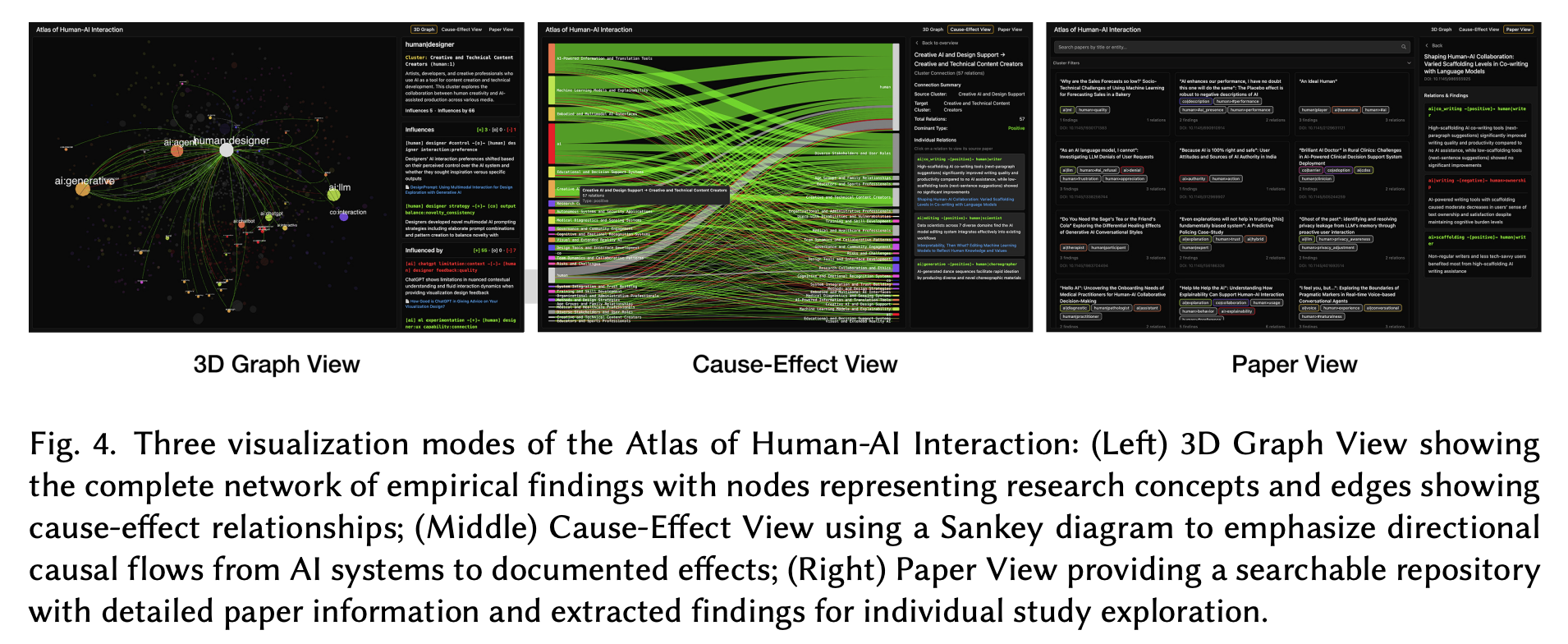

Atlas of Human-AI Interaction (v1): An Interactive Meta-Science Platform for Large-Scale Research Literature Sensemaking

The causal view seems … novel?

“This new era of human-AI interaction demands new maps, yet our current approaches to understanding this landscape remain limited. Returning to Engelbart’s vision of augmenting human intellect, we propose that AI itself can help us understand the ever-expanding field of human-AI interactions. This paper presents the Atlas of Human-AI Interaction, a novel framework for mapping the complex landscape of empirical findings in human-AI interaction research.”

“The preference ratings for Atlas over traditional research tools showed moderate but positive results, indicating potential for integration into existing research workflows while acknowledging that significant improvements would be needed for widespread adoption. The moderate rating likely reflects both the innovative potential of the system and participants’ recognition of areas requiring further development.”

“Our analysis reveals both areas of convergence and gaps in the research landscape. Educational contexts, transparency mechanisms, and trust development emerge as heavily studied areas with relatively consistent findings across multiple studies. In contrast, longitudinal effects, cross-domain applications, and reciprocal adaptation remain comparatively underexplored. The concentration of research on certain user groups (students, knowledge workers) and AI types (conversational, generative) suggests opportunities for broadening the empirical foundation.”

Archiwaranguprok, C., Chen, A., Karny, S., Ishii, H., Maes, P., & Pataranutaporn, P. (2025). Atlas of Human-AI Interaction (v1): An Interactive Meta-Science Platform for Large-Scale Research Literature Sensemaking. arXiv preprint arXiv:2509.25499.

https://arxiv.org/abs/2509.25499

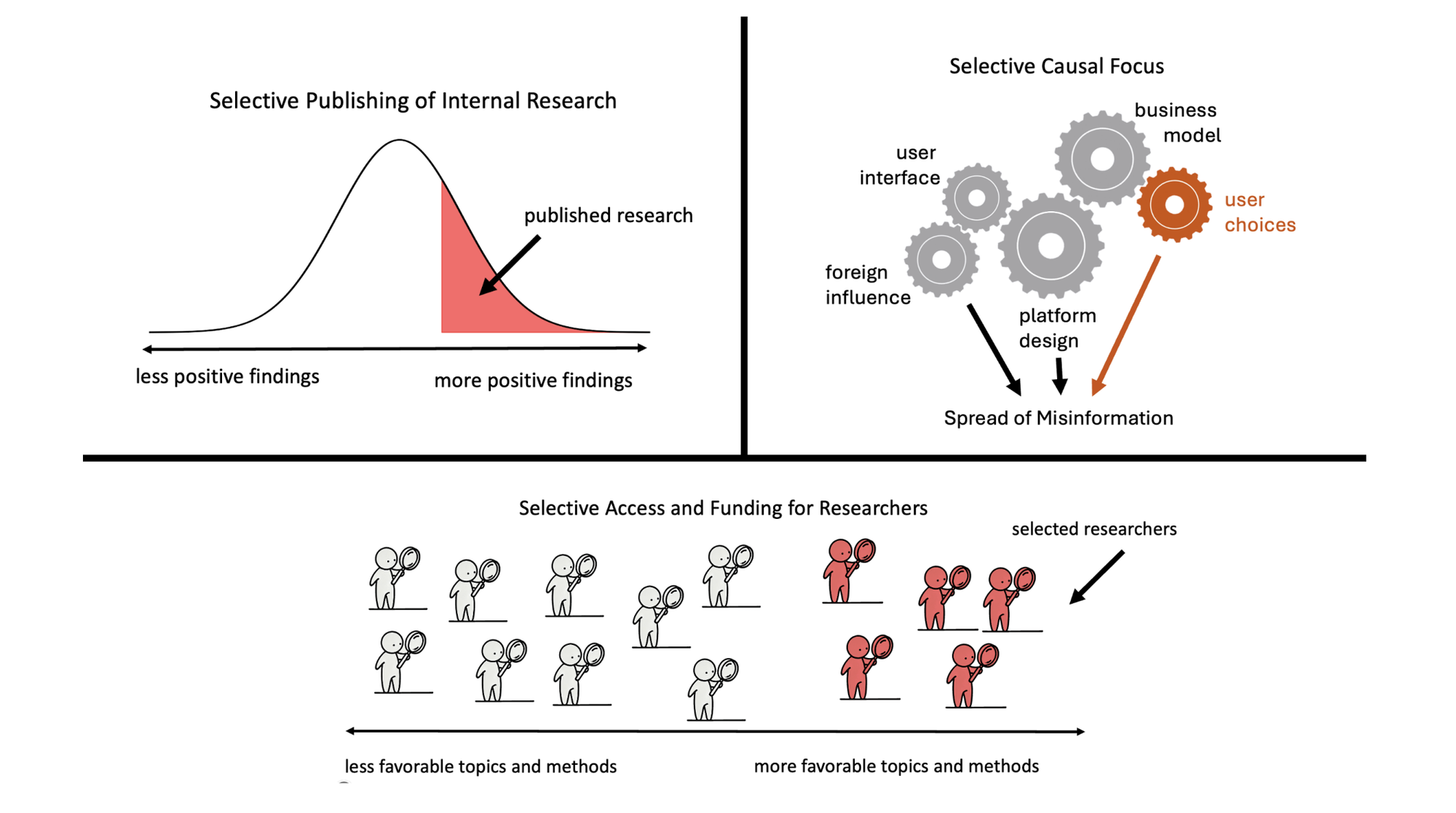

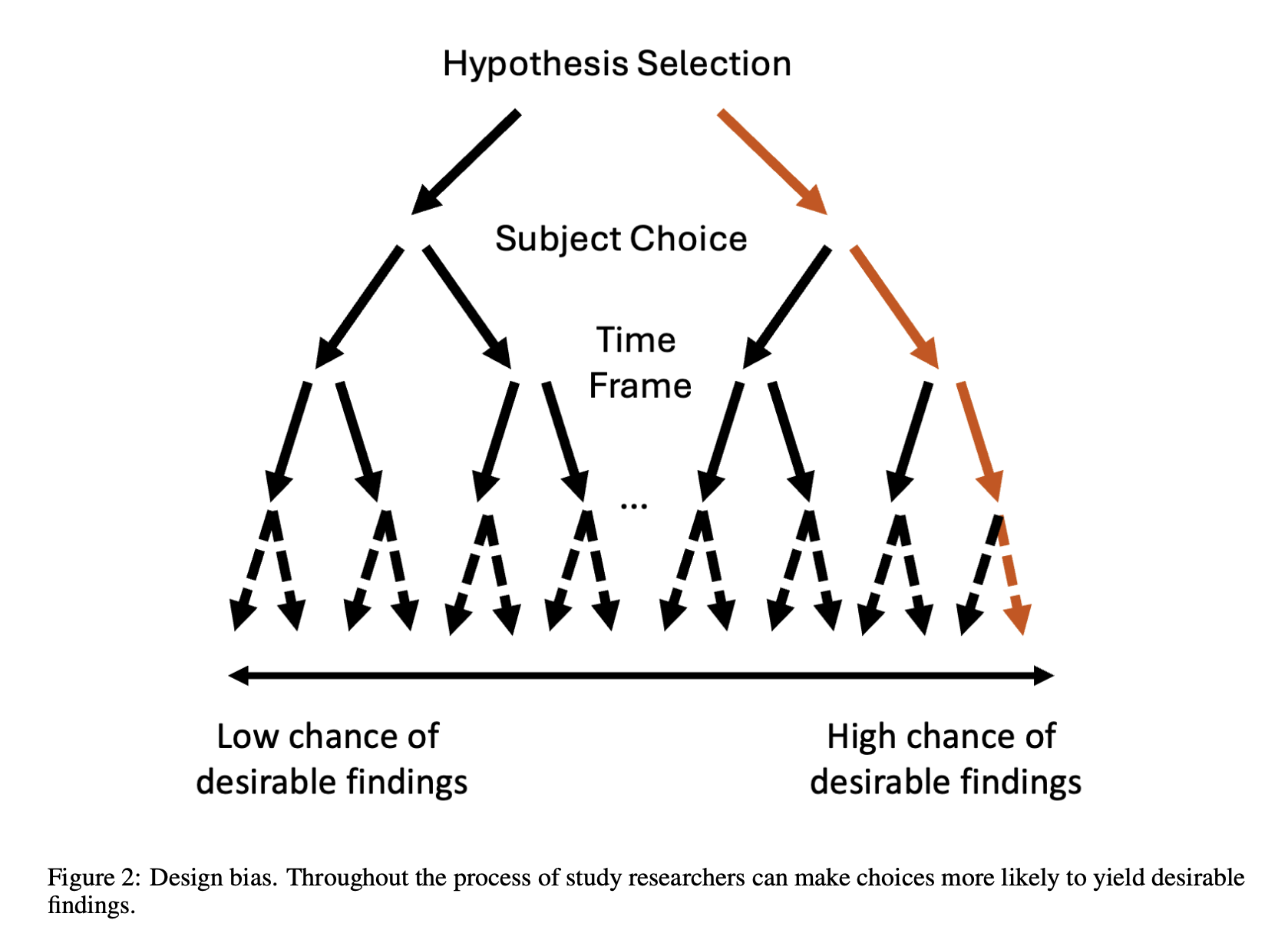

The Risks of Industry Influence in Tech Research

Who steers the steering committee?

“Unlike most other global-scale scientific challenges, however, the data necessary for scientific progress are generated and controlled by the same industry that might be subject to evidence-based regulation. Moreover, technology companies historically have been, and continue to be, a major source of funding for this field. These asymmetries in information and funding raise significant concerns about the potential for undue industry influence on the scientific record. In this Perspective, we explore how technology companies can influence our scientific understanding of their products.”

“Open science norms surrounding large sample sizes and statistical power may, likewise, advantage industry [89]. Academic sample sizes are constrained by the costs of paying participants and by ethical considerations enforced by IRBs. By contrast, researchers in the tech industry have access to millions—sometimes even billions—of users who can be studied without their knowledge, much less consent or payment. For example, research on X’s algorithmic impacts involved experiments conducted on millions of users without requiring IRB approval, participant consent, or payment [74].”

Bak-Coleman, J., O'Connor, C., Bergstrom, C., & West, J. (2025). The Risks of Industry Influence in Tech Research. arXiv preprint arXiv:2510.19894.

https://arxiv.org/abs/2510.19894

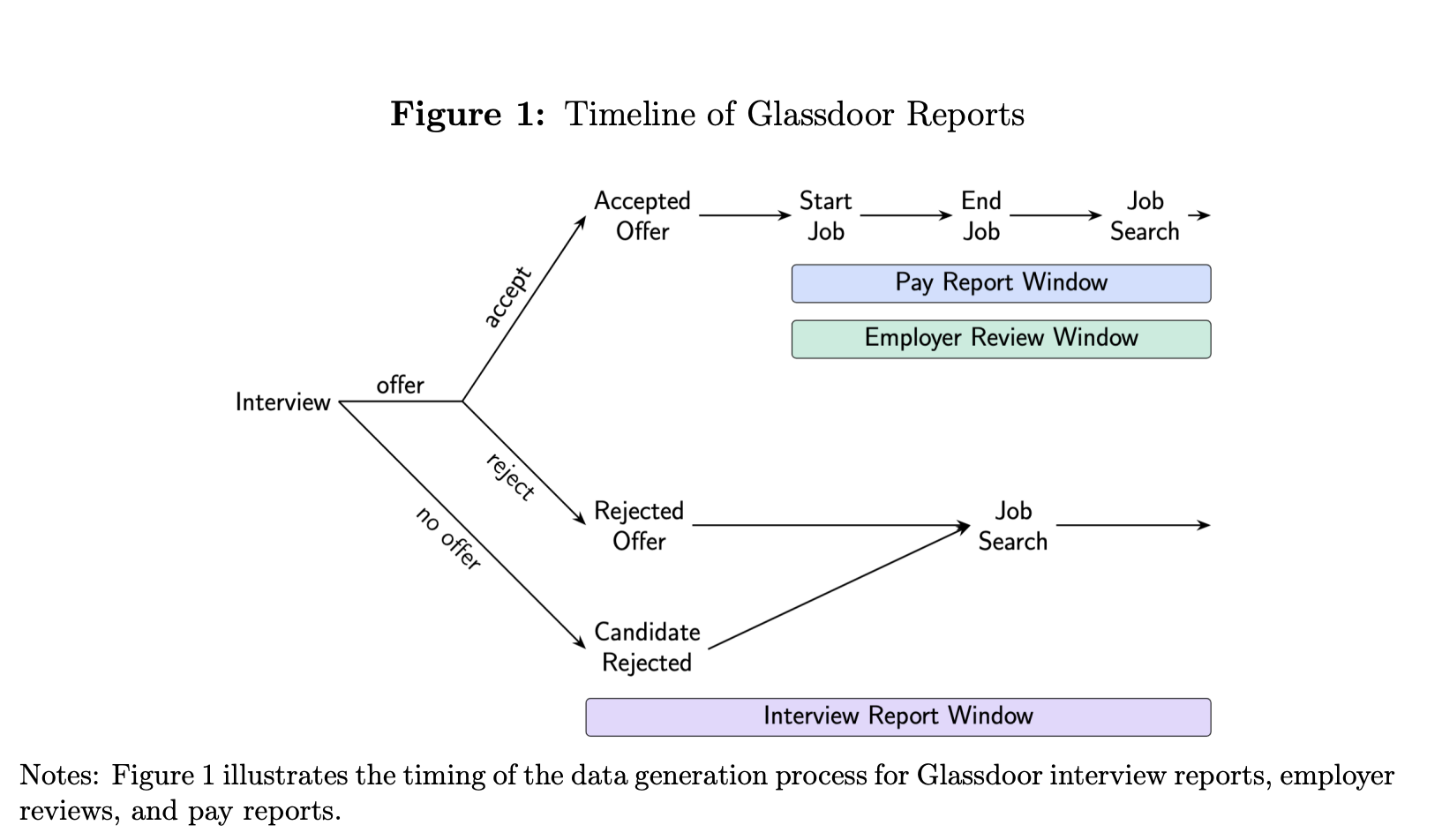

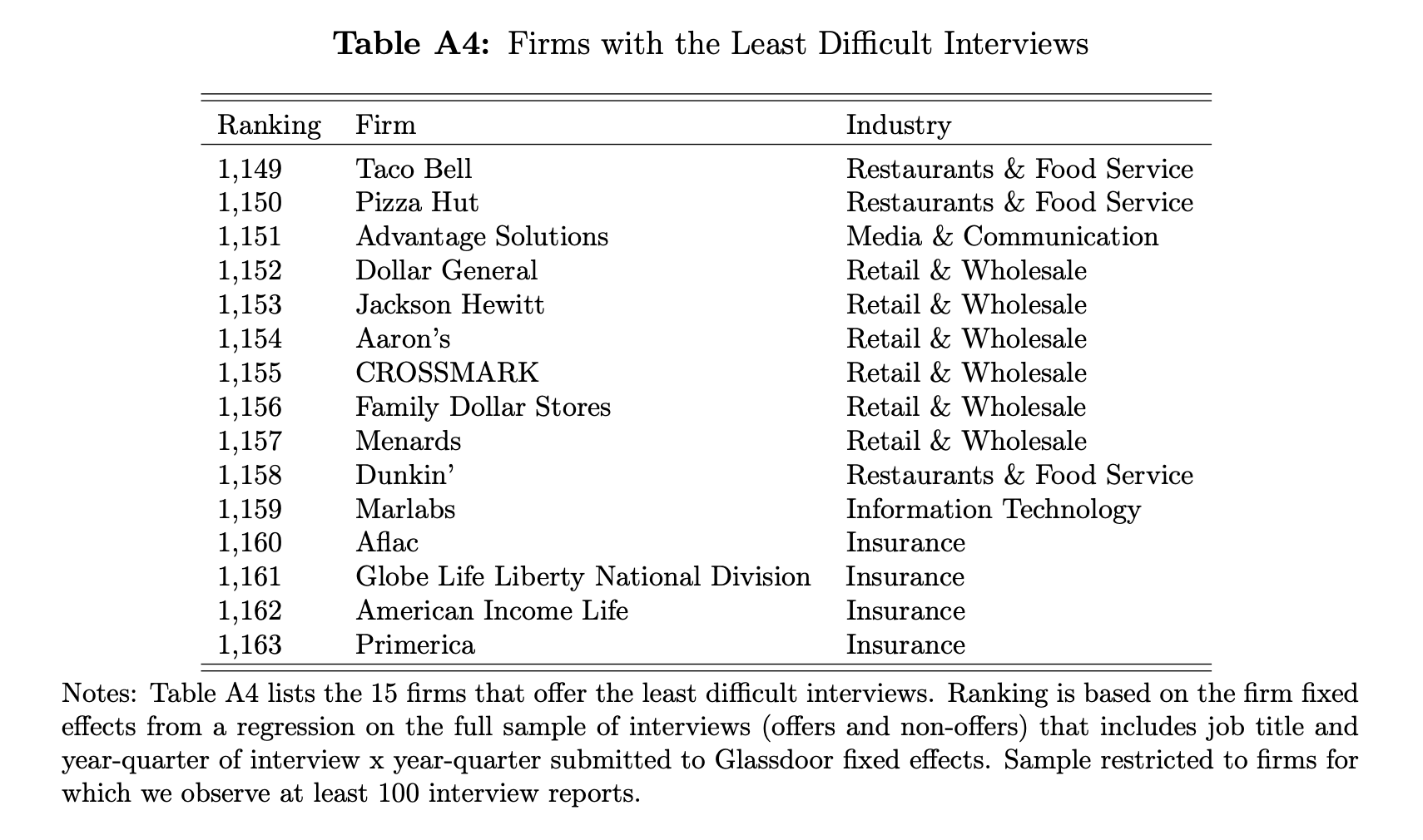

Interviews

Hard interviews signal greatness to great people

“Interviews are a two-way dialogue, featuring interactions between workers and hiring managers. Yet, the (personnel) literature has largely adopted the perspective of the latter, exploring how interviews allow firms to screen workers (Benson and Shaw, 2024; Hoffman and Stanton, 2024). We take the opposite, underappreciated perspective. We ask, do workers form perceptions of the employer through interviews? Indeed, they do. We then ask, what do workers learn about the employer from the interview? They learn aspects that determine match quality yet are hard to gauge otherwise, such as the quality of future peers.”

“These results highlight the importance of the manager’s decision: which employees should conduct which interviews? On the one hand, if firms appear to benefit from signaling to job seekers—through difficult interviews—that they employ workers of high ability, then surely having higher-skilled workers conduct interviews will increasingly convey such signals. On the other hand, higher-skilled workers are more productive, and will have a greater opportunity cost to participating in interviewing. A trade-off thus arises, and it is one that will almost certainly vary with the job for which the worker is being recruited, e.g., an assistant professor compared with a full professor, or a junior consultant compared with a C-suite executive.”

“We can only speculate as to what might happen if the interview process were to shift to being less “engaging” and more algorithmic. If this transition robs workers of these valuable signals of match quality, we may anticipate the labor market to become more inefficient—with heightened mismatch, reduced job satisfaction, and faster turnover.”

Ash, Shukla, Sockin (2025) Interviews

https://elliottash.com/wp-content/uploads/2025/10/Ash-Shukla-Sockin-Interviews.pdf

Apache Pinot

I’ve been hearing great things…

Reader Feedback

“Never attribute to malice what you can attribute to incompetence.”

Footnotes

I’ve demo’d a VTOC WIP (Virtual Twin of Customer, Work In Progress) twice.

And I have a few insights to share:

The first WIP was a conversational interface with a set of skills backed by MCP. This was a test of the Conversational-Max interface. I learned that a Con-Max interface is great when the user’s intent itself is clear. It isn’t so great when the user isn’t clear on their own intent. I’m seeing this demand pattern play out in multiple products, and it doesn’t work. During that first demo, I noticed that while experiencing a diminished mental state (nervousness), I managed to mess it up myself. I’m listening to counter-arguments that align with the Star Trek: The Next Generation Con-Max fetish. I hear you. I hear it. And yet, why does Worf use his fingers to fire torpedoes?

There’s a clue there.

The four verbs of innovation are add, subtract, multiply and divide.

I’ve gone for divide on this one.

The second WIP retains a conversational interface in the far right rail. Searching, Filtering, and Grouping verbs are surfaced in the Left and Centre rails. Answering and Chatting verbs remain in the right rail.

And I’m processing the feedback about this approach.

The qualitative interviews about the problem have been fascinating! Those who experience a mental state of certainty about Product-Market-Fit (PMF) are confused as to the problem they think I think I’m addressing. They don’t experience the problem. And so their problem becomes understanding why I’m addressing a problem. These are a lot of fun because I learn so much about their market in their mind. And I don’t try to cause doubt. I like to understand how they got the receipts, and how their priors are updated. And I leave them better than how I found them.

For those who have no paying customers and don’t talk to anybody, I don’t try to cause doubt. I’m rooting for them. Hopefully I’ll be seeing some of them later.

A more pro-social framing for the segment that I learn the most from is… that they are in search of PMF. I don’t say they have a PMF Problem, as that can induce a reactive state. Rather, we’re in search of the next PMF.

Aren’t we all? Whether we know it or not?
