Learning by Losing Signal

This week: Lost in backpropagation, temporal narrative atoms, exponential misalignment, brain-like AGI, energy based models, absurdity

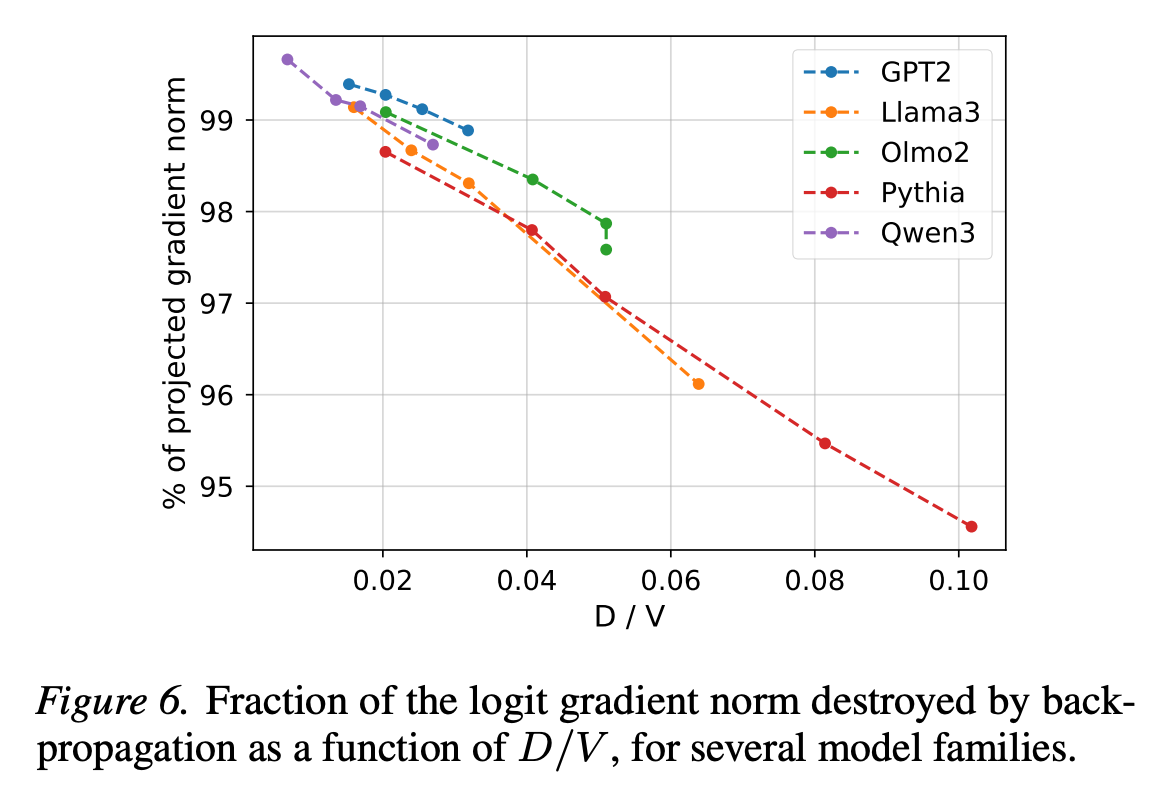

Lost in Backpropagation: The LM Head is a Gradient Bottleneck

“The need for new LM head designs”

“We show the softmax bottleneck is not only an expressivity bottleneck but also an optimization bottleneck. Backpropagating V-dimensional gradients through a rank-D linear layer induces unavoidable compression, which alters the training feedback provided to the vast majority of the parameters. We present a theoretical analysis of this phenomenon and measure empirically that 95-99% of the gradient norm is suppressed by the output layer, resulting in vastly suboptimal update directions.”

“We argue that this inherent flaw contributes to training inefficiencies at scale independently of the model architecture, and raises the need for new LM head designs.”

“We hypothesize that taking hidden dimensions into account when computing scaling laws (Hoffmann et al., 2022) could help refine their extrapolation quality. A more optimistic view of our work could also indicate that LMs have stronger potential than currently believed, and that convergence speed and/or performance gains could be obtained by better channeling the supervision signal through a better-suited logits prediction module.”
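A rough way to see the compression argument in the quotes above: the gradient on the logits lives in V dimensions, but the only part that reaches the rest of the network is what survives multiplication by the transposed LM head, which has rank at most D. The sketch below is my own toy, not the authors' measurement. It assumes a random Gaussian head `W` and a random Gaussian stand-in for the logit gradient `g`, and the function name `retained_gradient_fraction` is made up for illustration. Real logit gradients are structured, so don't expect it to reproduce the paper's 95-99% figure.

```python
import numpy as np


def retained_gradient_fraction(vocab_size: int, hidden_dim: int, seed: int = 0) -> float:
    """Share of a logit gradient's norm that lies in the column space of the LM head.

    Assumptions (illustrative only): W and g are random Gaussian draws.
    """
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((vocab_size, hidden_dim))  # LM head: logits = W @ h
    g = rng.standard_normal(vocab_size)                # stand-in for dLoss/dlogits

    # The hidden state receives W.T @ g. Any component of g orthogonal to the
    # column space of W is mapped to zero, so only the projection matters.
    Q, _ = np.linalg.qr(W)        # orthonormal basis of the column space, shape (V, D)
    g_visible = Q @ (Q.T @ g)     # projection of g onto that subspace

    return float(np.linalg.norm(g_visible) / np.linalg.norm(g))


if __name__ == "__main__":
    for d in (64, 256, 1024):
        frac = retained_gradient_fraction(vocab_size=4096, hidden_dim=d)
        print(f"V=4096, D={d}: retained norm fraction = {frac:.3f}")
```

For random inputs the retained fraction sits near sqrt(D/V), so a head with D=64 and V=4096 keeps roughly an eighth of the gradient norm. Widening D relative to V is the only lever in this toy, which is the intuition behind the call for different head designs.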

Godey, N., & Artzi, Y. (2026). Lost in Backpropagation: The LM Head is a Gradient Bottleneck. arXiv preprint arXiv:2603.10145.

https://arxiv.org/abs/2603.10145

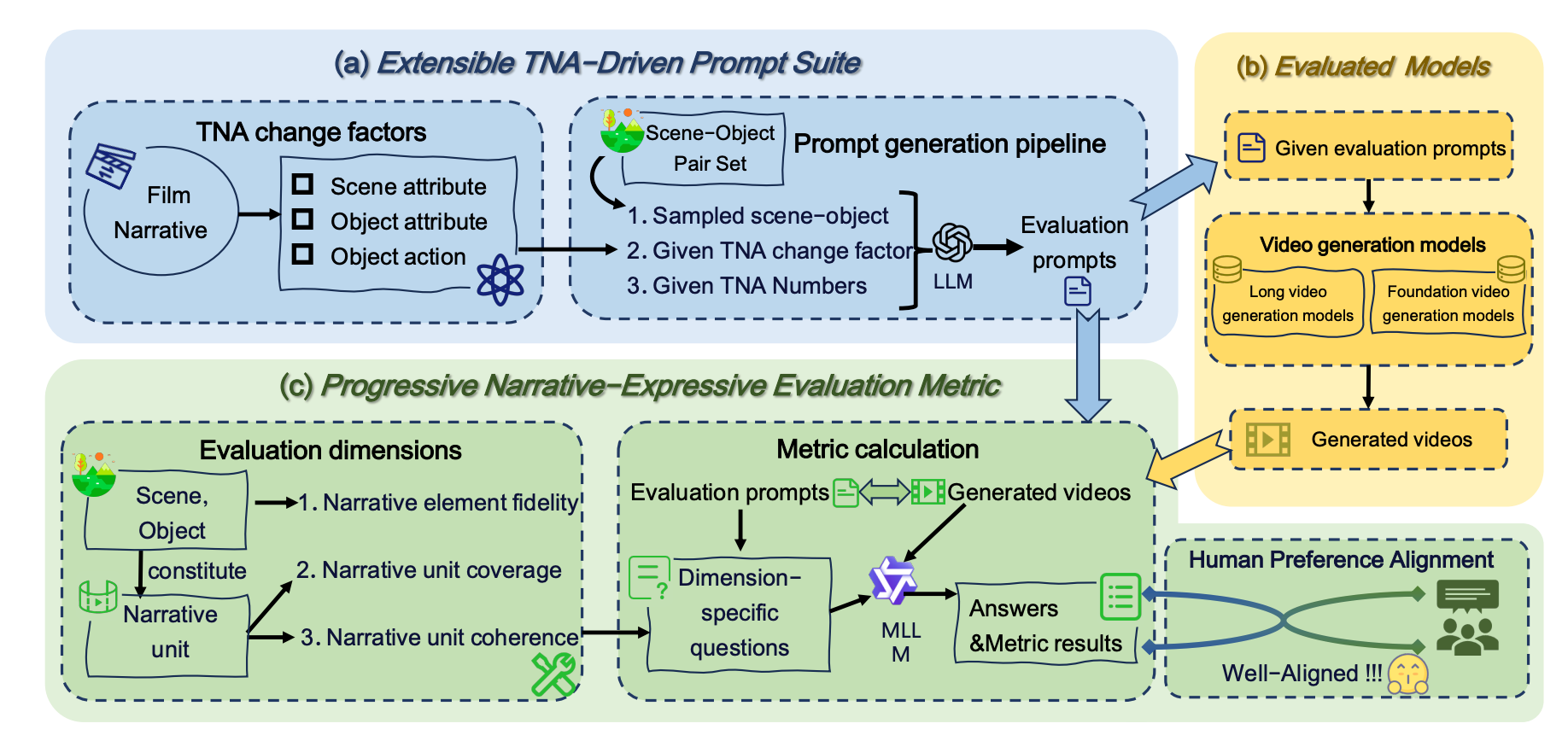

NarrLV: Towards a Comprehensive Narrative-Centric Evaluation for Long Video Generation

Temporal Narrative Atom … TNA for short. Oh, producers are gonna love that.

“However, due to the lack of evaluation benchmarks specifically designed for long video generation models, the current assessment of these models primarily relies on benchmarks with simple narrative prompts (e.g., VBench). To the best of our knowledge, our proposed NarrLV is the first benchmark to comprehensively evaluate the Narrative expression capabilities of Long Video generation models. Inspired by film narrative theory, (i) we first introduce the basic narrative unit maintaining continuous visual presentation in videos as Temporal Narrative Atom (TNA), and use its count to quantitatively measure narrative richness.”

“Richer narrative semantics in text prompts weaken the model’s representation of narrative units, while its ability to represent basic elements remains”

“To systematically evaluate the narrative quality of long video generation, we introduce three core metrics—Narrative Element Fidelity, Narrative Unit Coverage, and Narrative Unit Coherence—grounded in audiovisual storytelling principles.”

Feng, X., Yu, H., Wu, M., Hu, S., Chen, J., Zhu, C., ... & Huang, K. (2025). NarrLV: Towards a Comprehensive Narrative-Centric Evaluation for Long Video Generation. arXiv preprint arXiv:2507.11245.

https://arxiv.org/abs/2507.11245

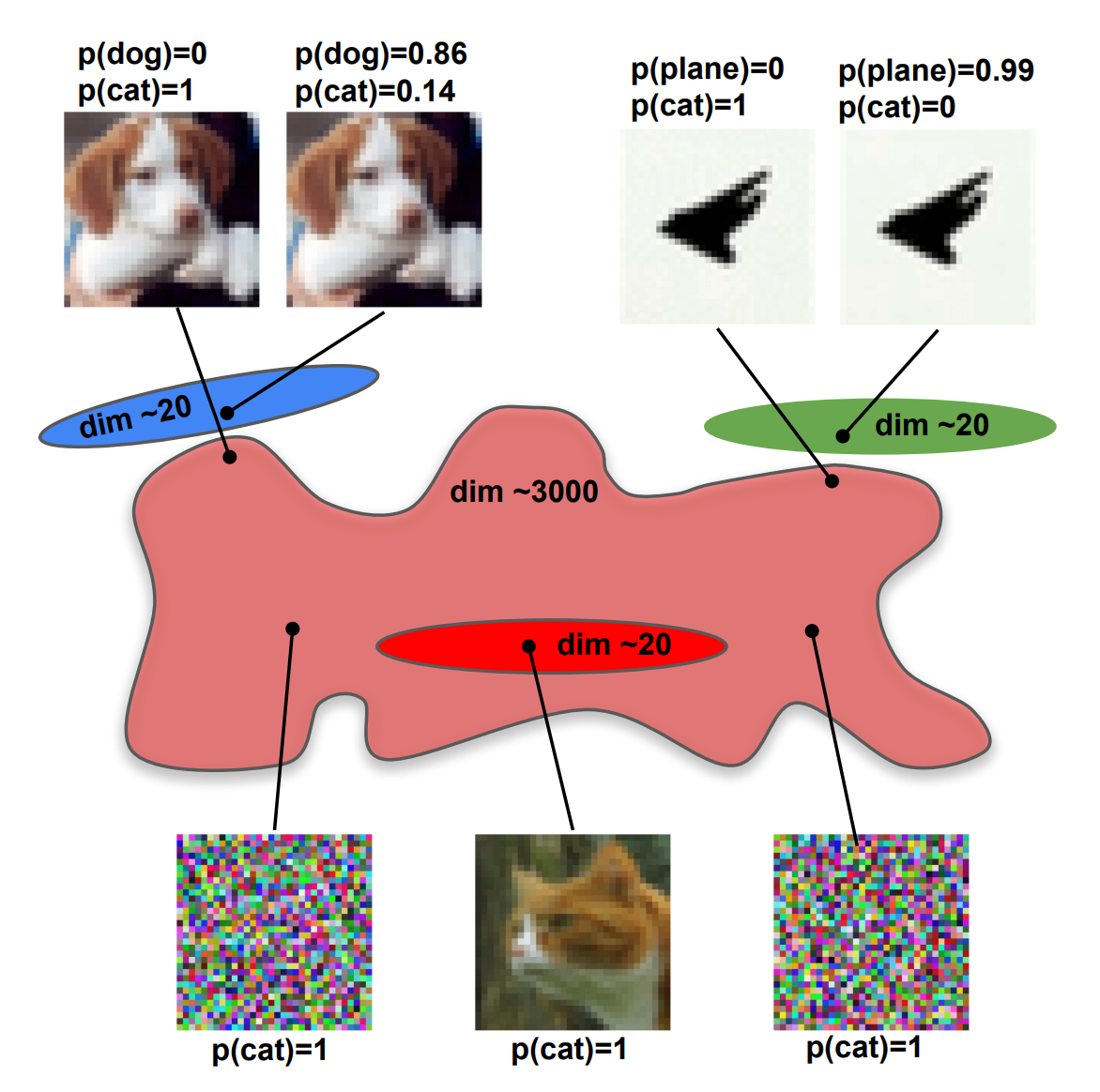

Solving adversarial examples requires solving exponential misalignment

Describes any organization

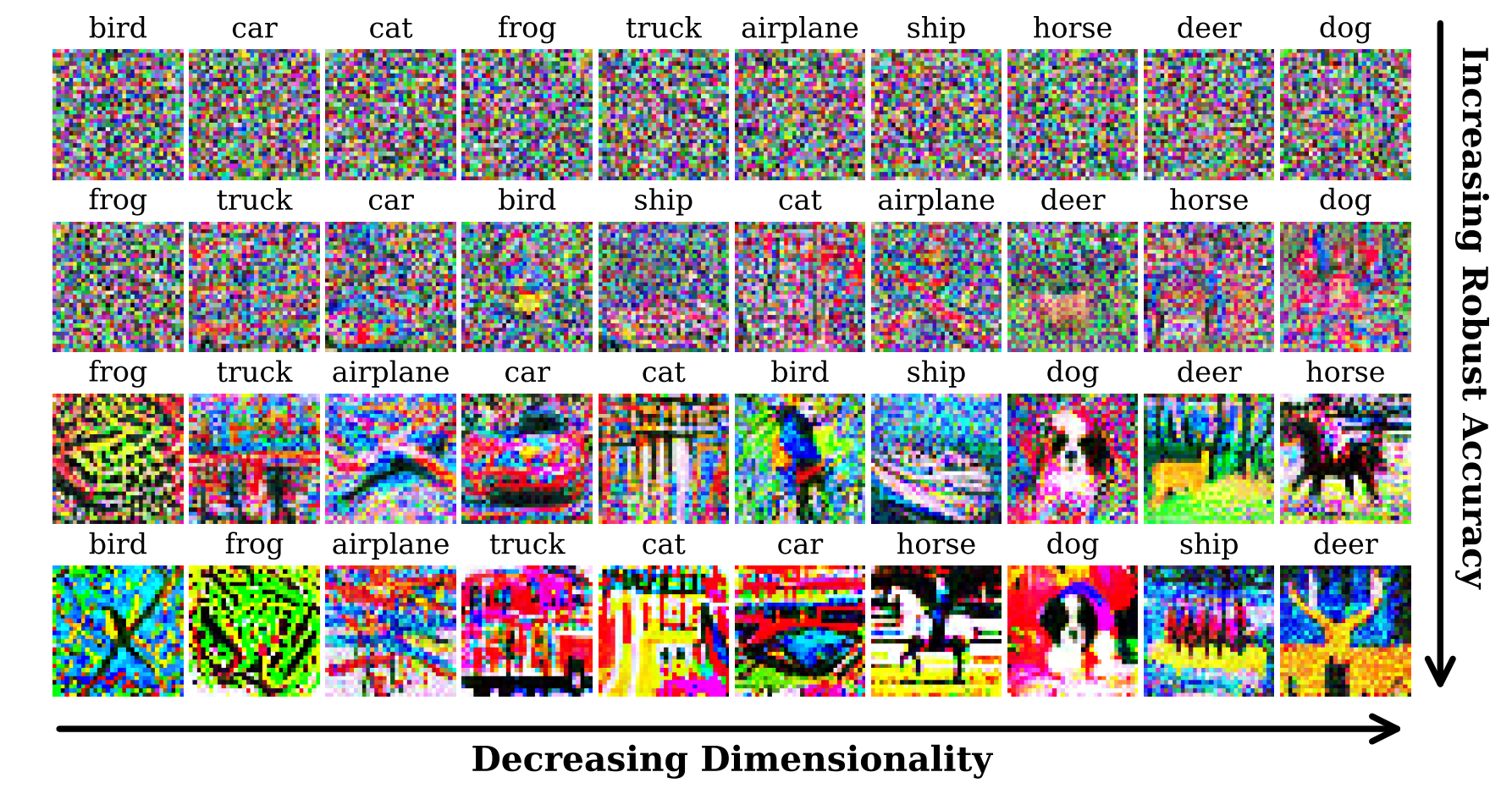

“To shed light, we define and analyze a network’s perceptual manifold (PM) for a class concept as the space of all inputs confidently assigned to that class by the network. We find, strikingly, that the dimensionalities of neural network PMs are orders of magnitude higher than those of natural human concepts. Since volume typically grows exponentially with dimension, this suggests exponential misalignment between machines and humans, with exponentially many inputs confidently assigned to concepts by machines but not humans.”

“This indicates that machine and human PMs for any concept are exponentially misaligned: there are exponentially many inputs confidently perceived as any given concept by machines, but not by humans (e.g. the two noise images in the network’s cat perceptual manifold). This exponential misalignment also explains the origin of adversarial examples: e.g. because the network’s cat PM fills up so much of image space, any other input (e.g. dog or airplane) is extremely close to it.”

“It would be interesting to extend our work beyond vision to language.”
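The "volume grows exponentially with dimension" step is easy to underestimate, so here is a back-of-envelope version. It is a sketch under my own assumptions, not the paper's estimator: treat a perceptual manifold as a d-dimensional box, and count how many distinguishable cells it holds if each axis spans a fixed number of resolution steps. The helper `log10_cell_count` and the dimensions 20 and 2000 are hypothetical, chosen only to show the scaling.

```python
import math


def log10_cell_count(steps_per_axis: float, dim: int) -> float:
    """log10 of steps_per_axis ** dim, computed in log space to avoid overflow."""
    return dim * math.log10(steps_per_axis)


if __name__ == "__main__":
    steps = 10.0          # hypothetical: 10 distinguishable steps along each axis
    human_dim = 20        # hypothetical dimensionality of a human concept
    machine_dim = 2000    # hypothetical dimensionality, two orders of magnitude higher

    human = log10_cell_count(steps, human_dim)
    machine = log10_cell_count(steps, machine_dim)
    print(f"human concept:   about 10^{human:.0f} cells")
    print(f"machine concept: about 10^{machine:.0f} cells")
    print(f"gap:             about 10^{machine - human:.0f} times more inputs")
```

A hundredfold gap in dimension turns into a gap of nearly two thousand orders of magnitude in volume, which is the sense in which the misalignment is exponential rather than merely large.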

Salvatore, A., Fort, S., & Ganguli, S. (2026). Solving adversarial examples requires solving exponential misalignment. arXiv preprint arXiv:2603.03507.

https://arxiv.org/abs/2603.03507

Thoughtworks Retreat

If code is crystallized experience, and code is truth, then who decides what is truth?

"We kept asking the same question in every room: if AI handles the code, where does the engineering actually go? Nobody had the same answer. But everybody agreed the question is urgent."

“A central insight was that an agent is more than its persona, goals or current context; it includes the history of work it has performed. While models are fungible within an agent (you can swap one LLM for another), changing a model fundamentally alters the agent's behavior and must be tracked. The work ledger emerged as the core primitive of this new operating system, analogous to a financial blockchain: searchable, auditable and enabling agents to discover and bid for work.”
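To make the work ledger idea concrete, here is a minimal sketch of what "searchable, auditable" could mean in code. Everything in it is hypothetical: the report does not specify a schema, so the class `WorkLedger`, the field names, and the hash chaining are my assumptions about one plausible shape. Each entry records which model the agent was running, so a model swap shows up in the history.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    """Stable SHA-256 over an entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class WorkLedger:
    """Append-only, hash-chained log of agent work (hypothetical design)."""

    entries: list = field(default_factory=list)

    def append(self, agent: str, model: str, kind: str, task: str, detail: str = "") -> dict:
        # Each entry points at the previous entry's hash, so edits are detectable.
        prev = self.entries[-1]["hash"] if self.entries else "genesis"
        body = {"agent": agent, "model": model, "kind": kind,
                "task": task, "detail": detail, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def search(self, **filters) -> list:
        """Searchable: return entries whose fields match every filter exactly."""
        return [e for e in self.entries
                if all(e.get(k) == v for k, v in filters.items())]

    def verify(self) -> bool:
        """Auditable: recompute the chain and report whether it is intact."""
        prev = "genesis"
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev"] != prev or e["hash"] != _digest(body):
                return False
            prev = e["hash"]
        return True


if __name__ == "__main__":
    ledger = WorkLedger()
    ledger.append("planner", "model-a", "posted", "refactor-billing")
    ledger.append("coder-1", "model-b", "bid", "refactor-billing", "2h")
    ledger.append("coder-1", "model-c", "done", "refactor-billing")  # model swap is on record
    print(ledger.search(kind="bid", task="refactor-billing"))
    print("chain intact:", ledger.verify())
```

The hash chain is the only nod to the blockchain analogy here. Discovery and bidding are reduced to posting and filtering entries, and a real system would need identity, permissions, and conflict handling that this toy ignores.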

Brain Like AGI

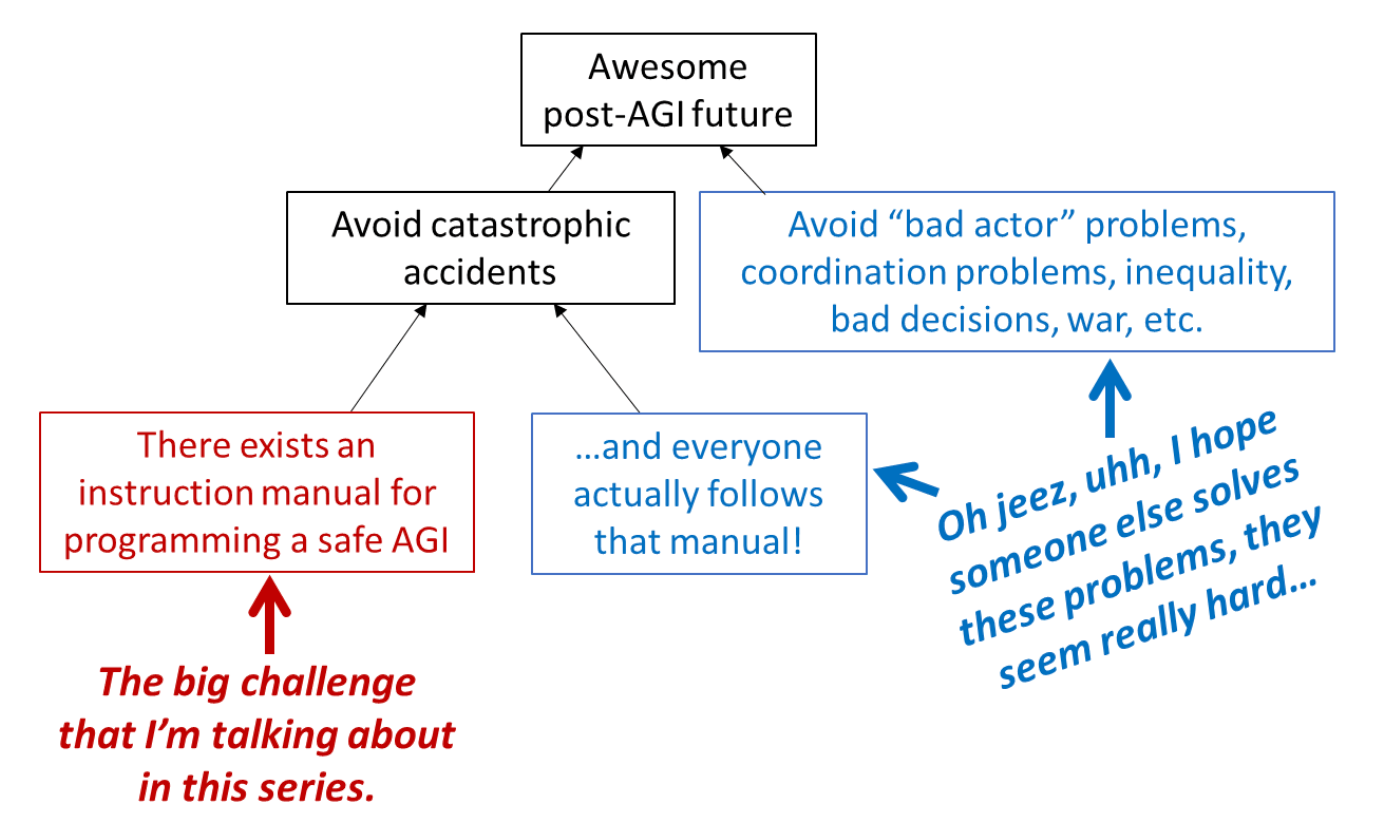

“There exists an instruction manual for programming a safe AGI”

“The blue boxes (see diagram above) also exist, and are absolutely essential, even if they’re out-of-scope for this particular series. The cause of the Chernobyl accident was not that nobody knew how to keep a nuclear chain reaction under control, but rather that best practices were not followed. In that case, all bets are off! Still, although we on the technical side can’t solve this noncompliance problem by ourselves, we can help on the margin, by developing best practices that are maximally idiot-proof, and minimally expensive.”

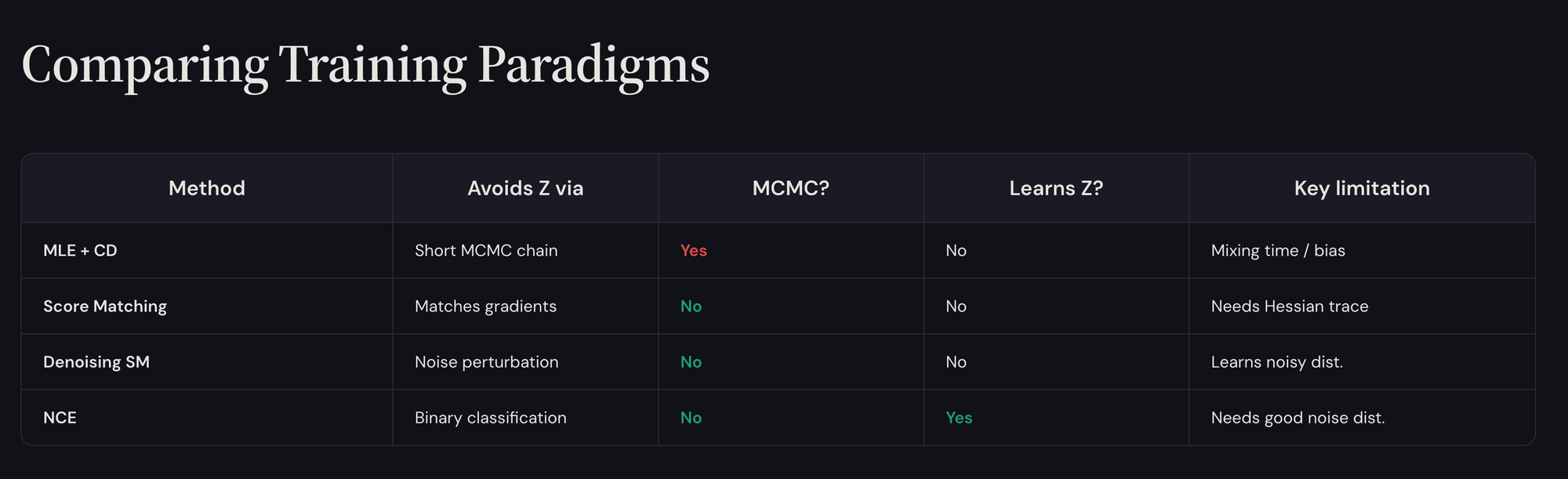

Energy Based Models

I remain open to it

Full presentation at:

https://www.shashankshekhar.com/static/talks/ebm.html

Absurdity Based Models

Reductio Ad Absurdaaaayummm

“Systems can persist in dysfunction indefinitely, and absurdity is not self-correcting.”

https://bsky.app/profile/beenwrekt.bsky.social/post/3mhe5susstc22

Reader Feedback

“How’s the median voter feeling about AI right now?”

Footnotes

There’s a lot of good stuff in that Thoughtworks report.

These lines in particular:

“Technical debt is becoming cognitive debt: the gap between system complexity and human understanding.”

“Conway's Law applies to agents too. Enterprise architecture must now account for agent mobility, specialization, and drift.”

“If code changes faster than humans can review it, the traditional model of building mental models through code review breaks down.”

“One practitioner noted that code review has historically served as much as a learning mechanism as a quality gate.”

Plus, at an unrelated event, I heard somebody say that “documentation is always out of date and code is truth.”

The terms truth, understanding, drift and learning are important. A community defines itself by what counts as knowledge and what does not. That includes knowledge of taboo and the rules that are designed to reduce upset. And it has rules for determining what counts as knowledge and what does not.

Most corporations, at scale, have loads of mechanisms that define what is knowledge and what is not, what can be said and what can’t, and how the presentation deck must always be on brand. They have creation myths and heroic epics. They have stories about why the fifth floor got the new colour copier and how office political apathy is destroying the office political system.

Swarms of agents. Teams of agents. Communities of agents. Whichever seafood-inspired branding we’re to get next, such systems emit more data than people can absorb.

If you’re from analytics, statistics, or business intelligence, then you already know this. Cackle, my friends. Cackle!

Decision automation was a response. And we’re here!

Most firms are organized to enshrine and reinforce knowledge. After all, where do we think bureaucracies come from? They don’t just spontaneously build themselves one night. They are self-generative and, as such, self-reinforcing.

Perhaps you can see it again in that document? Perhaps the fingerprints of old patterns reasserting themselves?
