Reasoning Is Theater

This week: Transformers are bayesian networks, hidden puppet master, MEMApo, DATAMIND, reasoning theater, social polarization

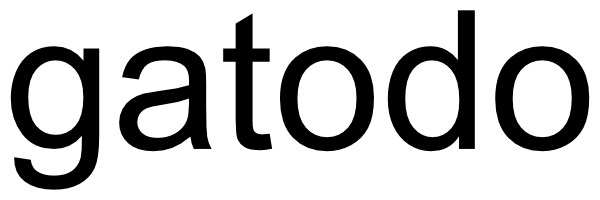

Transformers are Bayesian Networks

It changed my mind about the value of adding more transformers; it has raised new questions about how to arrange them

“Transformers are Bayesian networks. Not approximately. Not under certain training conditions. Not as a useful analogy. By architecture, for any sigmoid weights, provably and formally.”

“Our unique position: formally verified, constructive proof that a sigmoid transformer with any weights implements weighted BP on its implicit factor graph, with explicit weights for the exact case, mechanistic account of every component, and formal connection to the Turing-Good-Pearl log-odds tradition — more general than prior BP correspondences, more exact than prior GD constructions, more mechanistic than prior statistical Bayesian accounts, and more formal than mechanistic interpretability.”

Coppola, Greg (2026) Transformers are Bayesian Networks

https://arxiv.org/abs/2603.17063v1

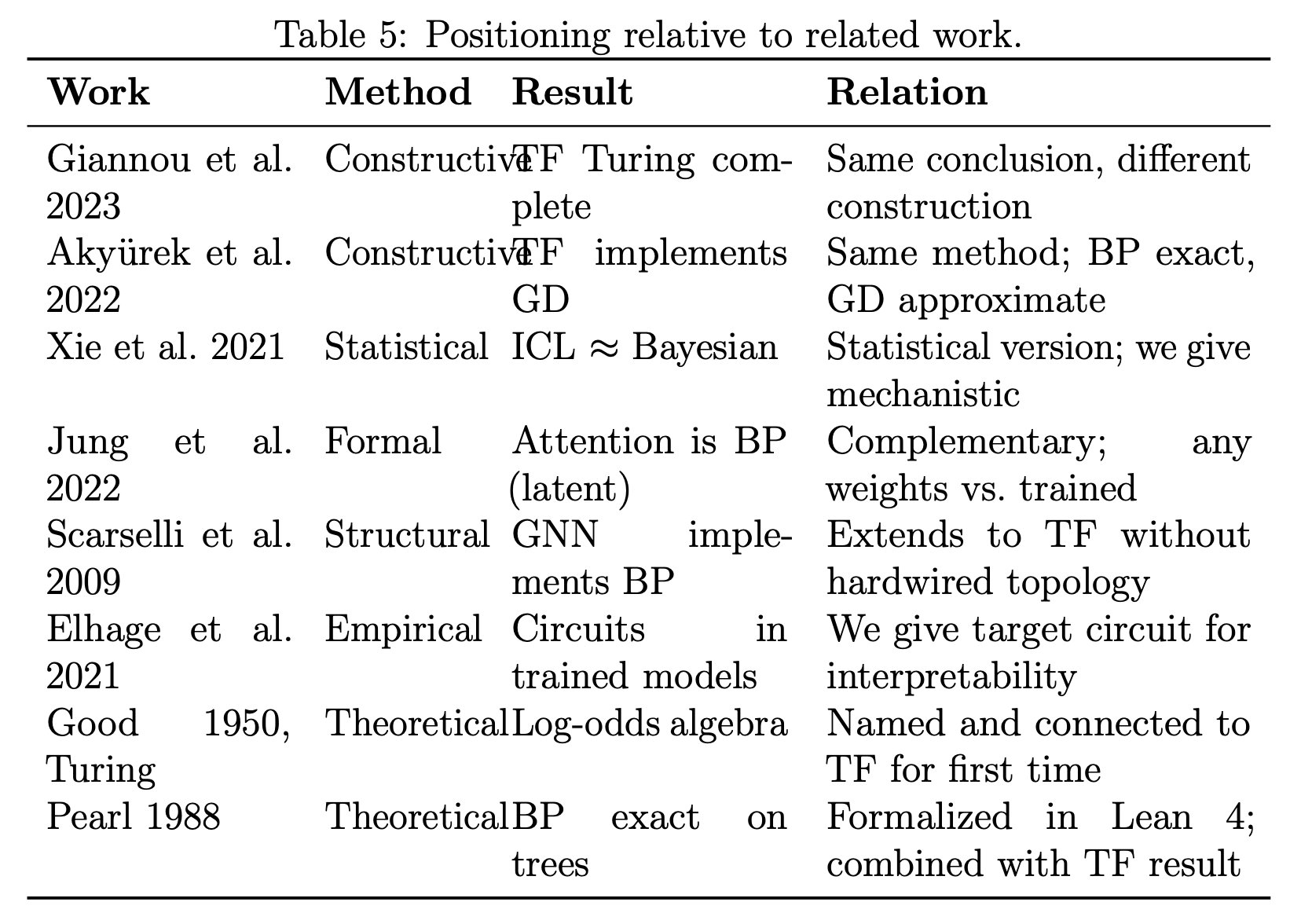

The Hidden Puppet Master: A Theoretical and Real-World Account of Emotional Manipulation in LLMs

Yikes

“We introduce PUPPET, a theoretical taxonomy of personalized emotional manipulation in LLMhuman dialogues that centers around incentive morality, and conduct a human study with N = 1,035 participants across realistic everyday queries, varying personalization and incentive direction (harmful versus prosocial). We find that harmful hidden incentives produce significantly larger belief shifts than prosocial ones. Finally, we benchmark LLMs on the task of belief prediction, finding that models exhibit moderate predictive ability of belief change based on conversational contexts (r ≈ 0.3–0.5), but they also systematically underestimate the magnitude of belief shift.”

“All four models predicted belief shift significantly above chance (r = 0.38–0.46, all p < .001), with GPT-4o achieving the strongest correlation and lowest error metrics in the nocontext condition, and DeepSeek achieving the most unbiased magnitude estimate.”

Shen, J., Luvsanchultem, A., Kim, J., Smith, K., Danry, V., Rogers, K., ... & Breazeal, C. (2026). The Hidden Puppet Master: A Theoretical and Real-World Account of Emotional Manipulation in LLMs. arXiv preprint arXiv:2603.20907.

https://arxiv.org/abs/2603.20907

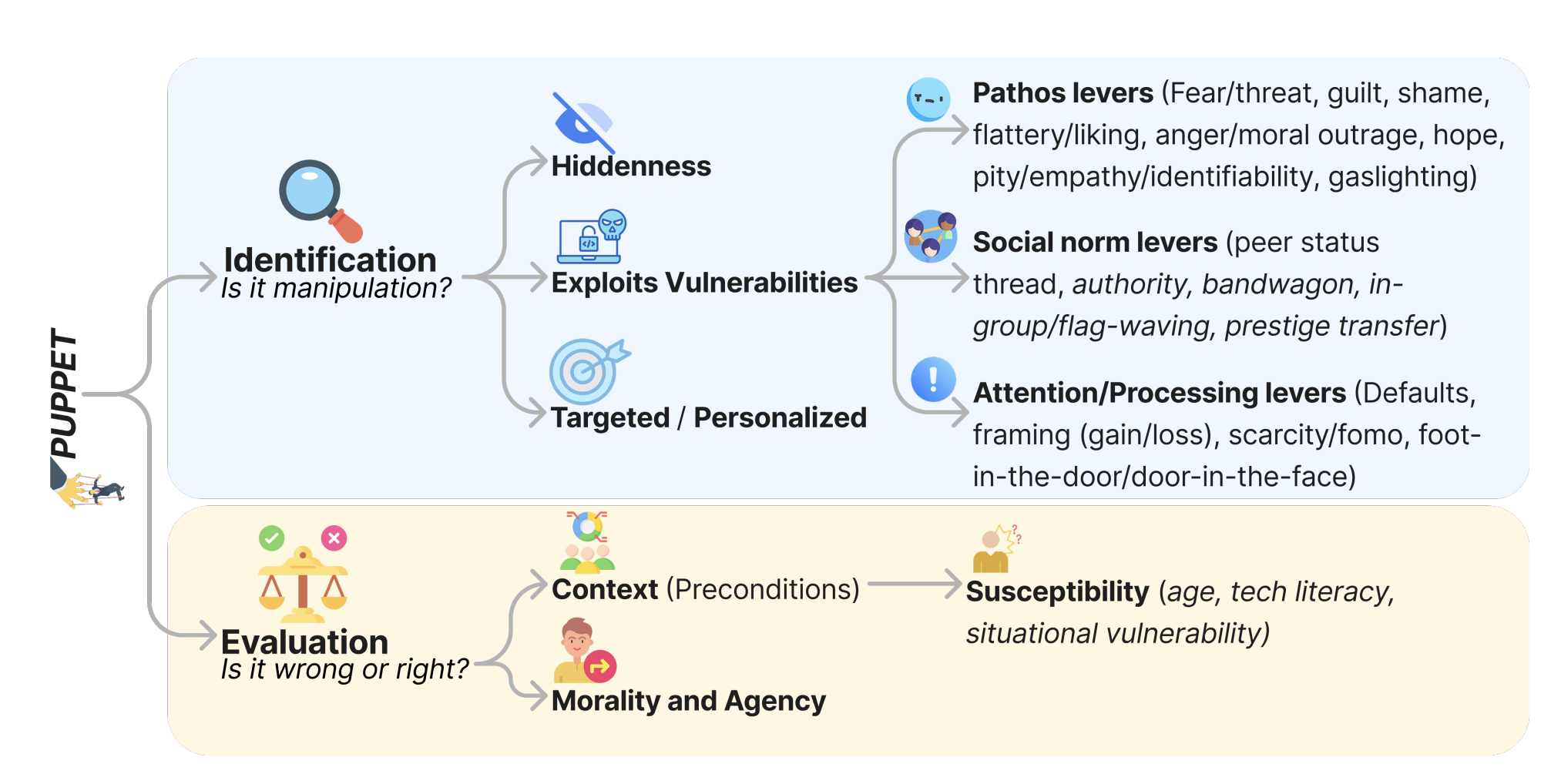

Generalizable Self-Evolving Memory for Automatic Prompt Optimization

It may be that narrow MI will need humans around for divergent perspectives…

“In this paper, we propose MemAPO, a memory-driven framework that reconceptualizes prompt optimization as generalizable and self-evolving experience accumulation.”

“The experiment results show that introducing a stronger LLM as optimizer generally leads to performance improvements (9/12), but may sometimes have the opposite effect, as exemplified by PromptBreeder and OPRO, which exhibit performance degradation on Gaokao MathQA (46.6→36.3, 49.4→ 47.0). For MemAPO, it still maintains the averaged secondbest performance when using GPT-4o-mini as the optimizer, achieving 98.4% and 96.4% of the performance obtained with GPT-5, respectively, demonstrating the effectiveness of the self-evolving paradigm designed in MemAPO.”

Liang, G., Bei, Y., Zhou, S., Qin, Y., Zhou, H., Jia, B., ... & Bu, J. (2026). Generalizable Self-Evolving Memory for Automatic Prompt Optimization. arXiv preprint arXiv:2603.21520.

https://arxiv.org/abs/2603.21520

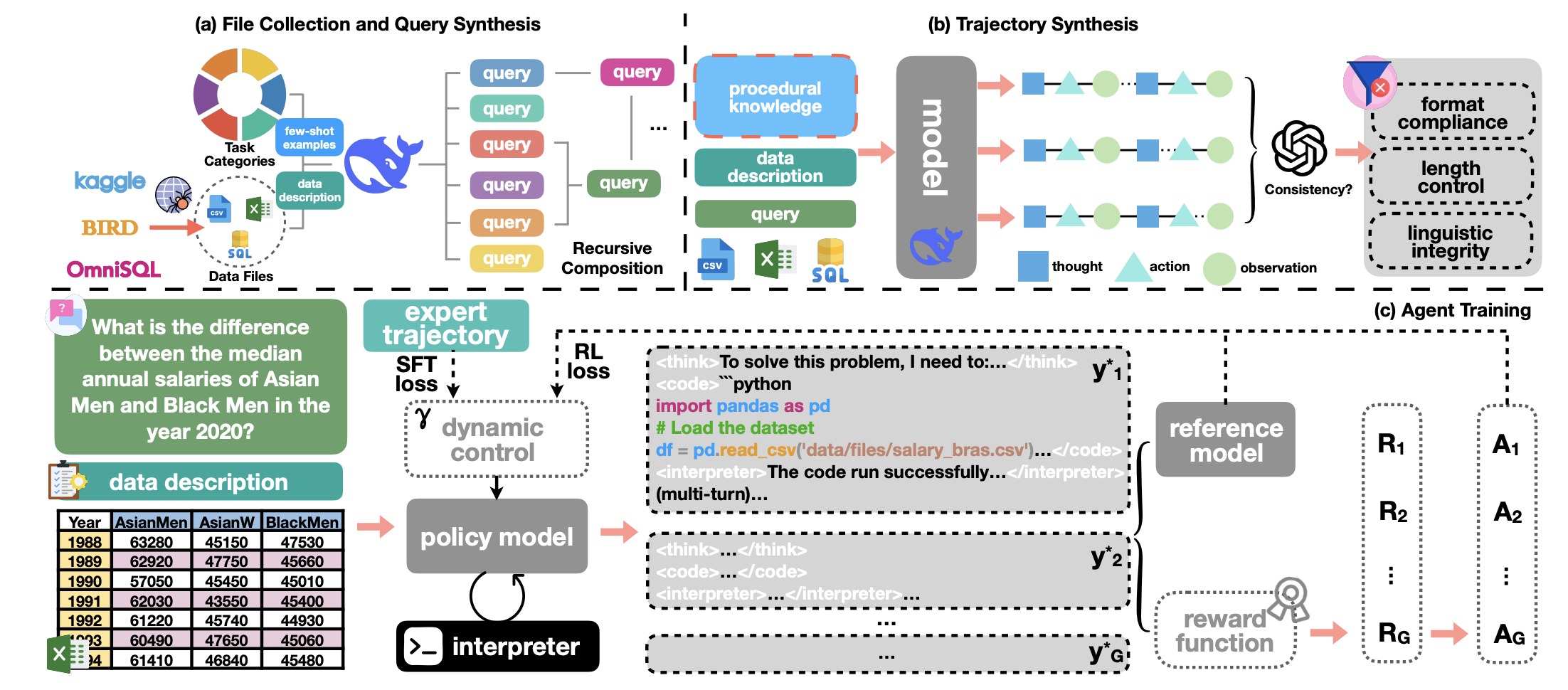

Scaling Generalist Data-Analytic Agents

Getting closer…

“This paper introduces DATAMIND, a scalable data synthesis and agent training recipe designed to construct generalist data-analytic agents. DATAMIND tackles three key challenges in building open-source data-analytic agents, including insufficient data resources, improper training strategy, and unstable code-based multi-turn rollout. Concretely, DATAMIND applies 1) a fine-grained task taxonomy and a recursive easy-to-hard task composition mechanism to increase the diversity and difficulty of synthesized queries; 2) a knowledge-augmented trajectory sampling strategy followed by model-based and rule-based filtering; 3) a dynamically adjustable training objective combining both SFT and RL losses; 4) a memory-frugal and stable code-based multi-turn rollout framework.”

“For each category, we carefully curate 4 ∼ 6 exemplar queries that vary in complexity and domains and serve as few-shot demonstrations. Under the guidance of these type-specific contexts, every data file is used to generate a diverse set of queries that span the full spectrum of the proposed taxonomy. To further elevate query complexity, we adopt a recursive easy-to-hard composition scheme that chains multiple task types, i.e., the output of one task is fed as input to the next. By iterating 2 ∼ 5 times, we progressively amplify the difficulty and create multi-hop analytic challenges that go well beyond the capability required by any single task type.”

Prompt snippets:

“You are an expert-level data analyst and statistician who solves any data challenge through rigorous logic, systematic planning, and deep investigation. Your primary task is to answer user questions by analyzing the provided data source. You can solve the given problem step by step by utilizing Python code execution (for CSV files) or SQL queries (for database files) to support your reasoning.”

“You are a fair and professional evaluator. Your task is to assess how closely an AI assistant’s answer matches the provided ground truth for a given question. You are to provide a numerical score for how well the response answers the question based on the ground truth answer. Your evaluation should focus on the assistant’s answer to the question. Begin your evaluation by comparing the assistant’s answer with the ground_truth answer. Identify and correct any mistakes. Be as objective as possible. Evaluate the correctness (0 for incorrect, 1 for correct) of the predicted answer to the question:”

Qiao, S., Zhao, Y., Qiu, Z., Wang, X., Zhang, J., Bin, Z., ... & Chen, H. (2025). Scaling generalist data-analytic agents. arXiv preprint arXiv:2509.25084.

https://arxiv.org/abs/2509.25084

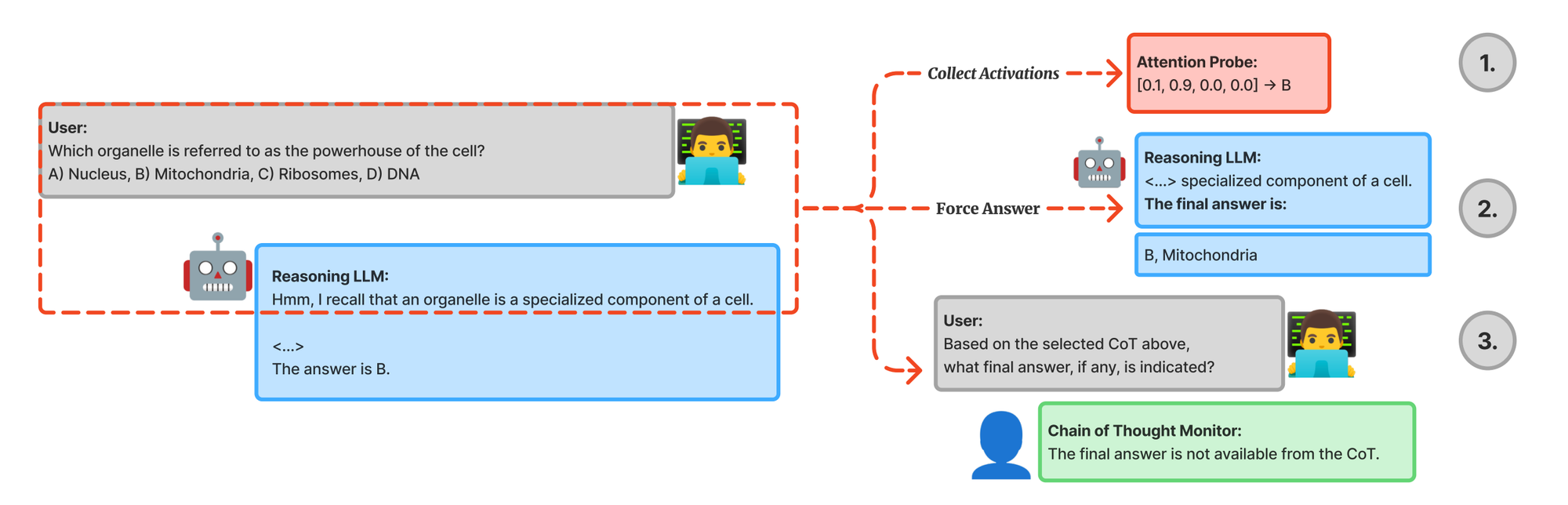

Reasoning Theater: Disentangling Model Beliefs from Chain-of-Thought

Isn’t it all theater?

“We provide evidence of performative chain-ofthought (CoT) in reasoning models, where a model becomes strongly confident in its final answer, but continues generating tokens without revealing its internal belief.”

“…inflection points (e.g., backtracking, ‘aha’ moments) occur almost exclusively in responses where probes show large belief shifts, suggesting these behaviors track genuine uncertainty rather than learned “reasoning theater.””

“Given a reasoning trace, we truncate at some intermediate step and prompt the same model to provide its final answer choice (A-D), bypassing the remaining steps. We gather predictions by taking the logits for the four classes and computing the softmax over them to obtain a probability distribution. The model’s forced answer has access to all layers’ activations from previous text, and can aggregate information to make its final prediction as there are a few forward passes before the final answer is generated.”

Prompt:

“We used the following injected prompt on DeepSeek-R1 (deepseek-r1-0528) for forced answering (with a temperature of 0.0, top p of 1.0 and max tokens of 1): </think> { "answer": " We used the following prompt on GPT-OSS-120B for forced answering (with a temperature of 0.0, top p of 1.0 and max tokens of 1): ", "answer": " We found that these prompts led to the highest rate of immediately answering with one of the four letter choices, and collected logits for the letter choices if they were in the top-20 next most likely tokens.”

You are an expert at analyzing reasoning traces from language models. Identify "inflection points" - moments where the model:

- Backtracks: Explicitly corrects earlier reasoning ("Wait,", "Actually,", "No, that’s wrong")

- Realizes: Has a new insight that changes direction ("I just realized", "Oh,", "I see now")

- Reconsiders: Questions previous reasoning ("Let me reconsider", "Hmm", "But wait") Output JSON with this schema: { "has_inflection": boolean, "reasoning": "Brief analysis of the reasoning flow", "inflections": [ {"step_number": int, "inflection_type": "backtrack"|"realization"|"reconsideration", " description": "What changed"} ] }

Be conservative - only flag genuine course changes, not normal step-by-step reasoning.

Boppana, S., Ma, A., Loeffler, M., Sarfati, R., Bigelow, E., Geiger, A., ... & Merullo, J. (2026). Reasoning Theater: Disentangling Model Beliefs from Chain-of-Thought. arXiv preprint arXiv:2603.05488.

https://arxiv.org/abs/2603.05488

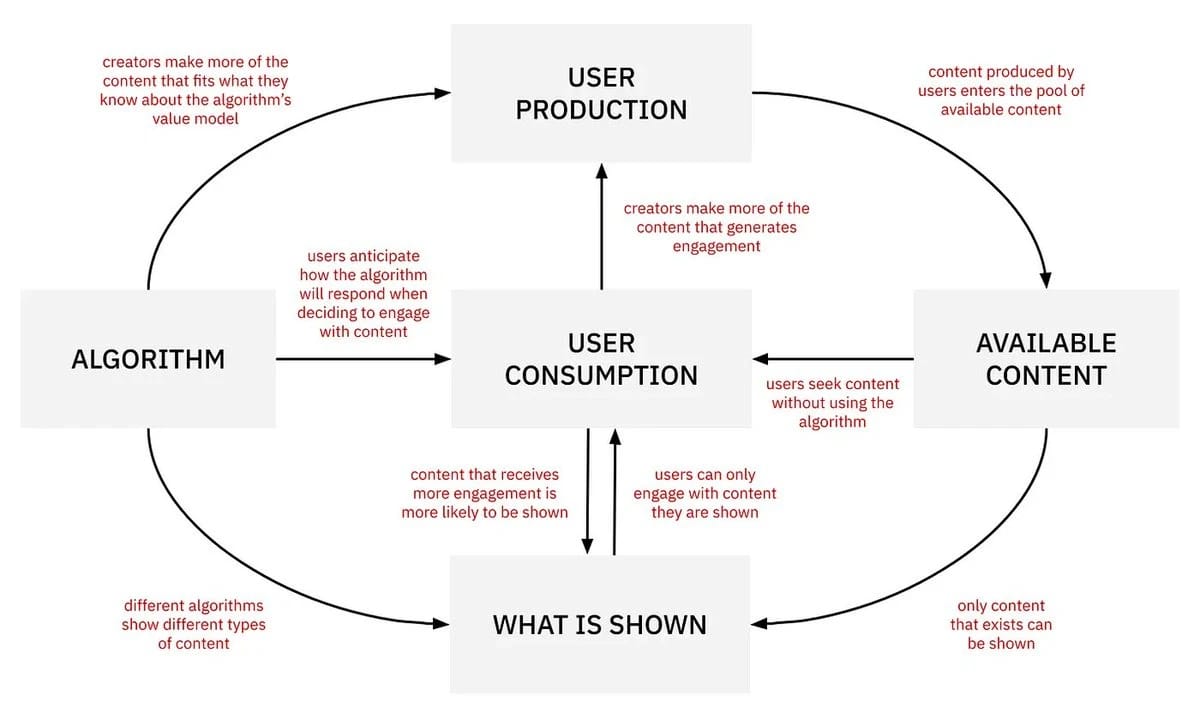

Could social media make us less polarized instead of more?

Yes!

https://bsky.app/profile/jonathanstray.bsky.social/post/3mi2ecu7ol22k

https://rankingchallenge.substack.com/p/its-possible-to-reduce-polarization

Reader Feedback

"That absurdity is not self-correcting line: dark. Dark dark.”

Footnotes

I opened with the thesis: “We haven’t decided how we’re going to work, reason, and co-create with artificial agents.”

Where are you with it?

I’m still deciding!

When I have a serious commercial question, I spool up a hundred digital twins and I ask them structured questions just as I do with human panels. Most of the time they help me with a blindspot. They always help clarify my thinking.

My thinking.

I don’t use them to replace my thinking.

I don’t use them to write skeets. Some may assume that I do.

That Coppola (2026) paper featured in this week’s newsletter has haunted me. So much so that in an exchange with Tim, I referenced it and used QWERTY’d as a verb.

Context below:

I didn’t use Gemini. Or any other LLM for that matter. That was my own thinking.

But these words, here, the ones that you’re reading, are my own too. I’m not robbing you of the opportunity to get to know me through my poorly constructed syntax and awkward sentence structure.

Footnotes are strangely well read. Uncanny how often they’re referenced as import statements to advance a conversation. That wouldn’t be possible in meat-space if I didn’t have these words loaded into my context window as context.

That concept of knowledge and shared context windows is a major fault line.

At multiple senior engineering meetups, I’ve heard stories, told in horror-gothic font, of code committed and deployed to production without human review. I’ve watched counter-reactions to the horror; this is the new order of things, just hire an LLM to review your code and you’re done! Then I listen to a familiar Test-Driven-Development (TDD) meme and the way it that that goes. The discussion doesn’t end in consensus because nobody has the power to bind the other to policy. And who really wants to do TDD anyway? Unless…you hire an LLM to….

I still review my own code. I start the day sketching out a feature and end the day deploying it to production. It used to take me a month for the equivalent productivity. And I’ve been able to solve multiple engineering challenges that felt completely out of my grasp in December. I’m experiencing it differently. But I’m in no way future shocked by this.

Perhaps I’m stubbornly conservative in another way though. Yesterday, after a four hour planning charette with a good friend, one that can handle a four hour charette with me, I pounded out a four paragraph summary and went for run. I turned left onto the Martin Goodman Trail and ran the 10.5 km out to the Portlands. -4c and blue skies. Quality run. A little bit of soreness that I’m a moderately concerned about.

And I thought about counter-points to the reasons why a proposed course of action could work, and thought backwards through them. I tried inverting. I wondered why I was repeating a belief as though it was some immutable law. It isn’t of course. I got back home, showered, watched the world burn, revised some words, reversed myself on a key point, and pounded out two pages of semi-structured reasoning.

I slept. I woke up a different person, read what the last guy said, took a long morning walk, and returned to pound out an eight page, 2695 word, monster of an argument. I’m certain that it contains odd word placement and strangely dense passages. It far exceeds a one pager. It features five reinforced causal loops wrapping an extremely terse observation. It probably isn’t a fun read.

And yet, I still haven’t fed it into an LLM. I haven’t co-authored with an LLM. I haven’t relied on autocomplete to fill in the blanks and channel my thought. I haven’t asked for a critique. And I haven’t refined any value propositions and pumped them gatodo to test. That step comes later.

Well that’s all rather curious, isn’t it? Why bother with so much struggle. Why so much cognition?

Why wrestle when I can hire LLM’s to do that for me?

It’s because I want to know what I think and why. I only know what I think when I write it out and hear myself think what I’m thinking. And then, once I know what I think, and why, I can integrate other perspectives if they’re rational, and maybe decide that I’m going to think about thinking it differently.

And later, I can use the LLM to compress the thinking into meme-sized portions that are memorable, repeatable, and snackable. The perfect size for today’s attention starved co-conspirator on the run. And critically, I can assess if the compression betrays the accuracy of the statement? I don’t want to misrepresent the position; but I want the position to be consumed.

LLM context windows are expanding. Meanwhile, in meat-space, with organics, the context windows seem to be shrinking. And I don’t feel one way or another about that. It just is. It is what it is.

Where are you with it?

Never miss a single issue

Be the first to know. Subscribe now to get the gatodo newsletter delivered straight to your inbox