Scarcity Still Prevails

This week: What will be scarce, emergent coordination, autonomous long-horizon engineering, FreezeEmpath, decoupling chain of thought, ReMe

What Will Be Scarce?

And…some of us will still want to work with each other… … ?

“Economics is the study of decision-making under constraints, i.e., scarcity. If advanced AI brings material abundance—if machines can produce many if not all forms of human production at very low marginal cost—does economics become irrelevant? No, we will still have scarcity, but the kind of scarcity that matters will change. Ultimately the answer to any question about the future economics of advanced AI begins with identifying what becomes scarce. After answering that question, the rest of the analysis is pretty straightforward. In this essay I’m going to explore what becomes scarce when automation can replicate many if not all human production, and what that may mean for the types of jobs that emerge.”

“I want to consider a different scenario, one where automation can replicate human production and the commodities that it produces (a big if!!!), but human labor does not disappear. How could this be the case? A lot of analysis takes the economy as given: there is a set of jobs and a set of goods/services produced by the economy. If the same set of goods/services can be produced by cheaper machines, then these machines replace humans and the jobs disappear. But the economics of structural change, combined with deep-seated features of human preferences, suggests something different: as people get richer, they don’t just want more commodities. They want things that aren’t commodities in the standard sense of the word. The social aspects of products such as the relationships, the status, and exclusivity—what Rene Girard called the mimetic properties of desire—become much more relevant once people’s basic needs are satisfied. And the demand for these properties will bring the human element back into the production process, and with it, the jobs.”

“It will trigger the emergence of something new: a post-commodity economy, where a growing share of expenditure goes toward goods and services whose value is inseparable from the human who provided them. The same economic forces that moved 40% of the American workforce off farms and into factories and offices will move workers out of automatable commodity production and into what I’ll call the relational sector. By this I mean the human-intensive, provenance-rich, sometimes artisanal part of the economy where the human aspect is part of the value of the good or service itself. The economics of scarcity won’t disappear, it’ll just relocate.”

“The claim is about sectoral reallocation in rich economies: as AI makes commodity production cheap, spending and employment shift toward high-income-elasticity sectors where human involvement still carries value. In other words, labor share can fall and the relational sector can still remain a substantial part of the economy. But importantly, the inherent properties of demand for the relational sector also ensure that labor remains a substantial share of the overall economy (i.e., it will not shrink to zero).”

“René Girard called it mimetic desire: the idea that we don’t desire objects only for their intrinsic properties, but because other people desire them as well. We want what others want, and we want it even more when they can’t have it—for status, social capital, reputation, etc. Desire is not just a relationship between a person and an object; it is also a function of what other people desire.”

“Finally, the category of human goods is much broader than artists and authenticity goods. Education, care, hospitality, therapy, and various local services are, for reasons outlined elsewhere in this essay, categories where the value of the service is likely to be increasingly linked to the human providing them. The BLS Consumer Expenditure Survey shows that households in the top income quintile spent significantly more on these more relational categories than lower-income consumers, and even now these sectors are not small parts of the economy: together, they employ nearly 50 million people in the US. This gives some credence to the claim that the relational sector will be a substantial overall share of the economy post-AGI.”

https://aleximas.substack.com/p/what-will-be-scarce

Emergent Coordination in Multi-Agent Language Models

Finally: a metric

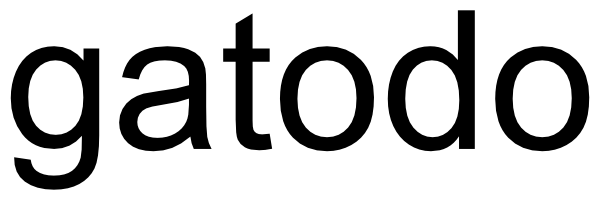

“Despite impressive performance of many multi-agent systems we do not yet have a principled understanding when and how such synergy arises, what role agent differentiation plays, and how to steer it systematically.”

“Determining whether multi-agent systems function as genuine collectives—rather than merely aggregating agents—requires a principled measure of synergy. We follow a purely data-driven approach to assess whether these systems exhibit higher-order synergy, characterized by structural coupling and joint information about future states and task outcomes, as evidence of emergent collective behavior. Intuitively, synergy refers to information about a target that a collection of variables provide only jointly but not individually (Rosas et al., 2020; Humphreys, 1997).”
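The intuition in that last sentence can be made concrete with the textbook XOR case. What follows is a toy sketch, not the estimator used in the paper: the helper `mutual_information` and the variable names are assumptions made for illustration. Two binary sources each carry zero information about the target on their own, yet together they determine it completely.

```python
from collections import Counter
from itertools import product
from math import log2


def mutual_information(pairs):
    """Mutual information (bits) between the two elements of each (a, b) pair.

    The pairs are treated as equally likely samples from the joint distribution.
    """
    n = len(pairs)
    joint = Counter(pairs)
    marg_a = Counter(a for a, _ in pairs)
    marg_b = Counter(b for _, b in pairs)
    return sum(
        (c / n) * log2((c / n) / ((marg_a[a] / n) * (marg_b[b] / n)))
        for (a, b), c in joint.items()
    )


# All four equally likely input combinations; the target is the XOR of the sources.
samples = [(x1, x2, x1 ^ x2) for x1, x2 in product([0, 1], repeat=2)]

i_x1 = mutual_information([(x1, y) for x1, _, y in samples])            # source 1 alone
i_x2 = mutual_information([(x2, y) for _, x2, y in samples])            # source 2 alone
i_joint = mutual_information([((x1, x2), y) for x1, x2, y in samples])  # both together

print(f"I(X1;Y)={i_x1:.1f}  I(X2;Y)={i_x2:.1f}  I(X1,X2;Y)={i_joint:.1f}")
```

Each source alone tells you nothing about the target, and the pair tells you everything. That gap is the "only jointly but not individually" part of the definition.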

“We note that evidence of higher-order synergy should not be interpreted as implying sophisticated cognition or consciousness. As conceptualized here, synergy is a structural property of part-whole relationships within multi-agent interactions. Such higher-order structures are known to arise in simple systems, including agents governed by reinforcement learning (Fulker et al., 2024) or via basic nonlinearities in contagion processes (Iacopini et al., 2019; Lee et al., 2025). Without attributing human-like cognition to the agents, the patterns of interaction we observe mirror well-established principles of collective intelligence in human groups (Riedl et al., 2021; DeChurch & Mesmer-Magnus, 2010): effective multi-agent performance requires both alignment on shared objectives and complementary contributions across members.”

Riedl, C. (2025). Emergent coordination in multi-agent language models. arXiv preprint arXiv:2510.05174.

https://arxiv.org/abs/2510.05174

Toward Autonomous Long-Horizon Engineering for ML Research

Science branches (?)

“Automating scientific research has emerged as one of the most ambitious goals in artificial intelligence. In the context of AI and machine learning, progress on this front could substantially accelerate the pace of scientific discovery, improve reproducibility, and broaden access to high-quality research workflows.”

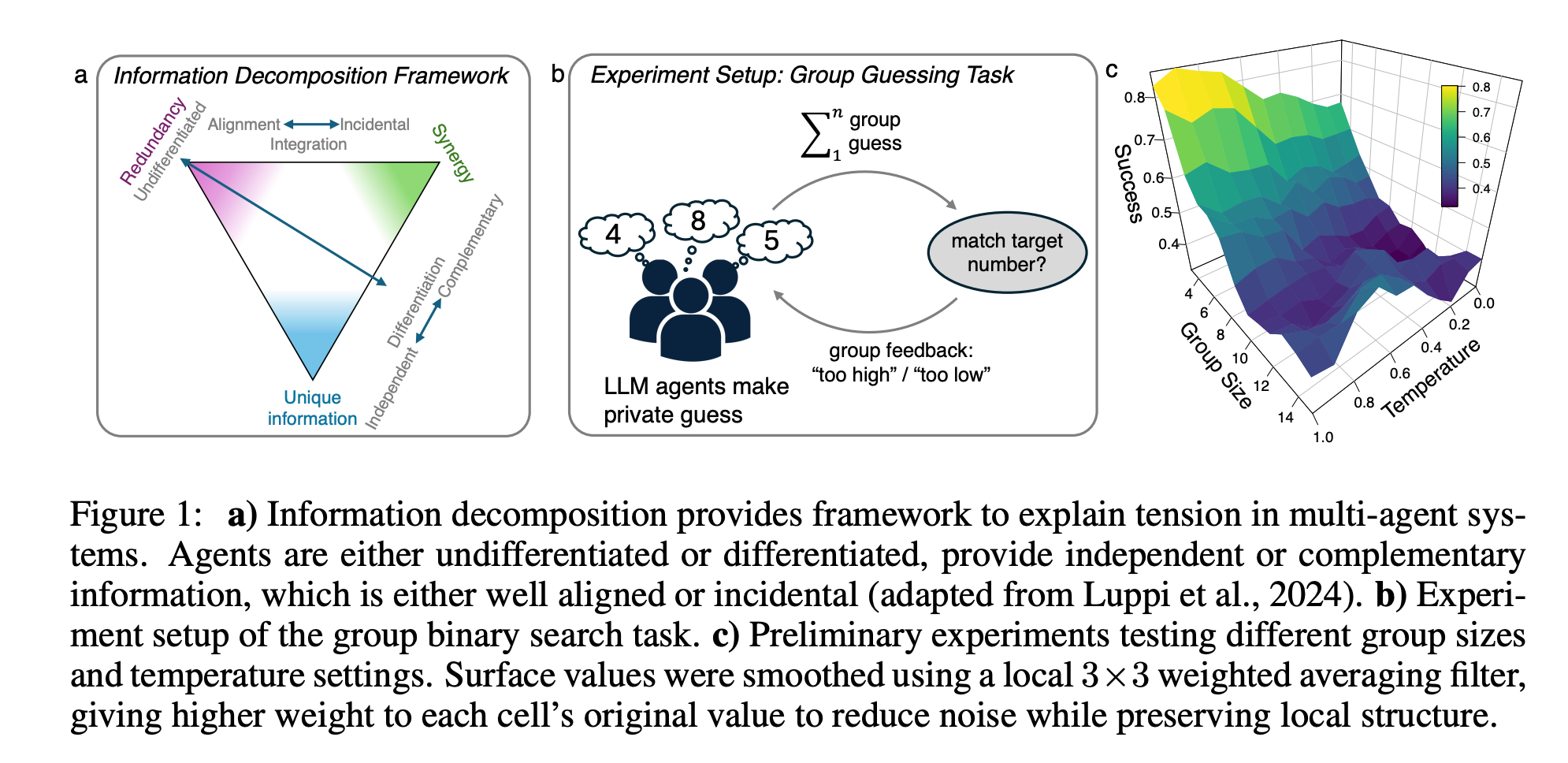

“Within this broader agenda, we focus on a more operationally demanding setting: autonomous long-horizon engineering for ML research. In this setting, an agent must own the end-to-end technical work of building, running, and iteratively improving ML research systems over hours or days. This includes turning papers or other research specifications into executable implementations, setting up environments and resources, running experiments, diagnosing failures, and refining the system toward reliable empirical outcomes. We refer to this setting as machine learning research engineering.”

“Our central design principle is to treat long-horizon performance as a joint problem of orchestration and state continuity: agents must not only coordinate work across heterogeneous stages, but also preserve evolving project state with enough fidelity for later decisions to remain coherent over time. For orchestration, AiScientist uses a hierarchical research team in which a top-level Orchestrator manages stage-level planning and iterative delegation to specialized agents for paper comprehension, task prioritization, implementation, and experimentation, which may further spawn focused subagents when needed. To support state continuity, AiScientist instantiates a File-as-Bus protocol in which agents coordinate through evolved files in a permission-scoped shared workspace rather than repeatedly compressing project state into lossy conversational handoffs. This design yields thin control over thick state: the orchestrator operates on concise stage-level summaries and a compact workspace map to keep control lightweight, while detailed analyses, code, and experiment records persist as durable artifacts that downstream agents can repeatedly re-ground on throughout multi-day implementation-and-debugging loops.”
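To picture what coordinating through files rather than conversational handoffs might look like, here is a minimal sketch. It is not AiScientist's implementation: the `Workspace` class, the agent names, the directory layout, and the permission model are all hypothetical. It only illustrates the two ideas in the quote, write scopes per agent and a compact workspace map for the orchestrator.

```python
import tempfile
from pathlib import Path


class Workspace:
    """Hypothetical shared workspace: each agent may write only to its own top-level dirs."""

    def __init__(self, root, write_scopes):
        self.root = Path(root)
        self.write_scopes = write_scopes  # agent name -> writable top-level directories

    def write(self, agent, rel_path, text):
        # Simplified scope check; a real system would also guard against ".." traversal.
        top = Path(rel_path).parts[0]
        if top not in self.write_scopes.get(agent, []):
            raise PermissionError(f"{agent} may not write to {rel_path}")
        path = self.root / rel_path
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text)

    def read(self, rel_path):
        return (self.root / rel_path).read_text()

    def workspace_map(self):
        """Compact listing of artifacts: what an orchestrator could scan instead of full files."""
        return sorted(
            p.relative_to(self.root).as_posix()
            for p in self.root.rglob("*")
            if p.is_file()
        )


with tempfile.TemporaryDirectory() as tmp:
    ws = Workspace(tmp, {
        "implementer": ["code", "summaries"],
        "experimenter": ["runs", "summaries"],
    })
    # Thick state: detailed artifacts persist as files.
    ws.write("implementer", "code/train.py", "print('training stub')\n")
    ws.write("experimenter", "runs/run_001.log", "loss=2.31 at step 0\n")
    # Thin control: a short stage-level summary for the orchestrator.
    ws.write("implementer", "summaries/implementation.md", "train.py drafted; loader untested.\n")

    file_map = ws.workspace_map()
    summary = ws.read("summaries/implementation.md")

    try:
        ws.write("experimenter", "code/train.py", "overwritten")
        blocked = False
    except PermissionError:
        blocked = True
```

The orchestrator in this sketch would read only `file_map` and the files under `summaries/`, while a downstream agent can re-open `runs/run_001.log` or `code/train.py` whenever it needs the full detail.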

Chen, G., Chen, J., Chen, L., Zhao, J., Meng, F., Zhao, W. X., ... & Jia, K. (2026). Toward Autonomous Long-Horizon Engineering for ML Research. arXiv preprint arXiv:2604.13018.

https://arxiv.org/abs/2604.13018

FreezeEmpath: Efficient Training for Empathetic Spoken Chatbots with Frozen LLMs

What is scarce?

“The most significant challenge lies in the scarcity of empathetic speech instruction data.”

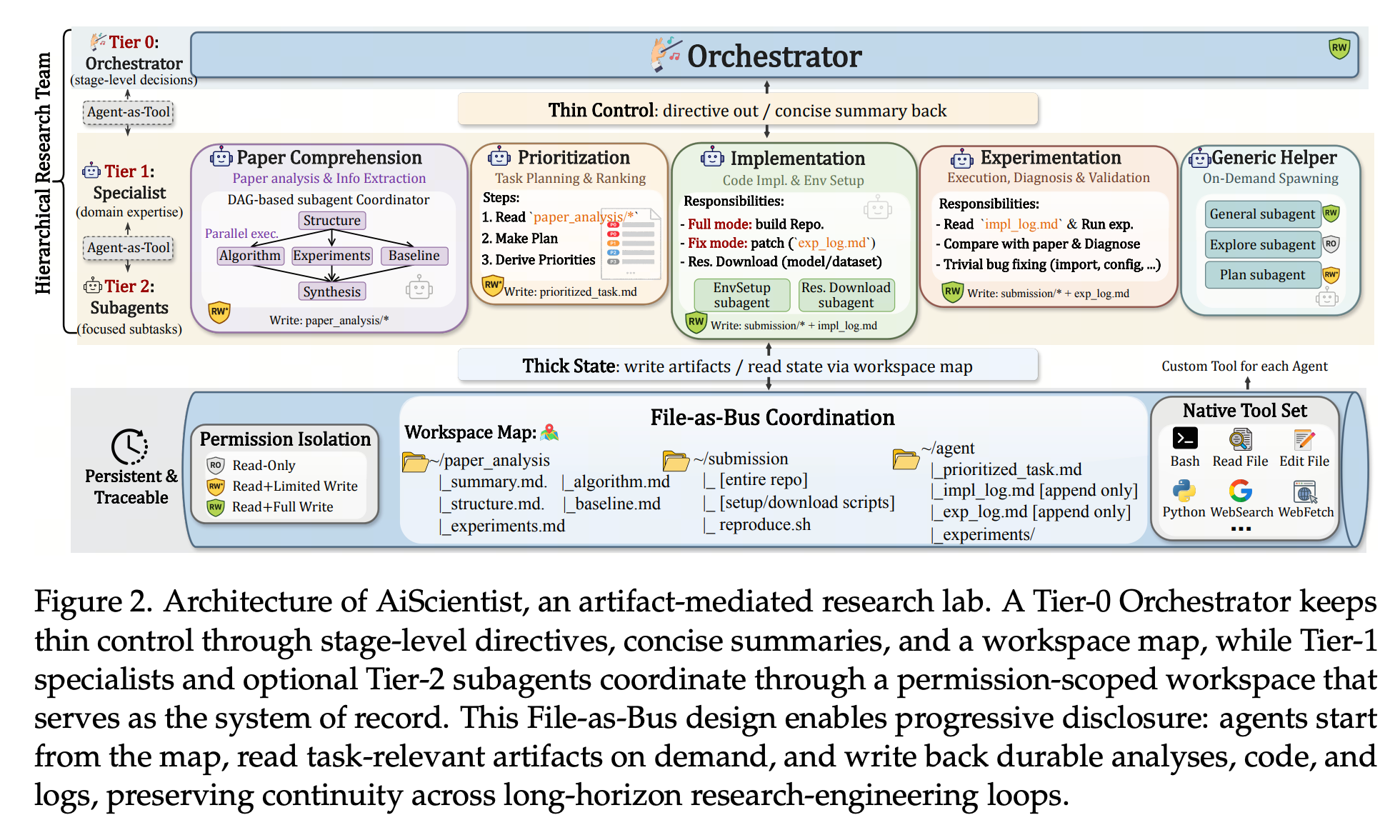

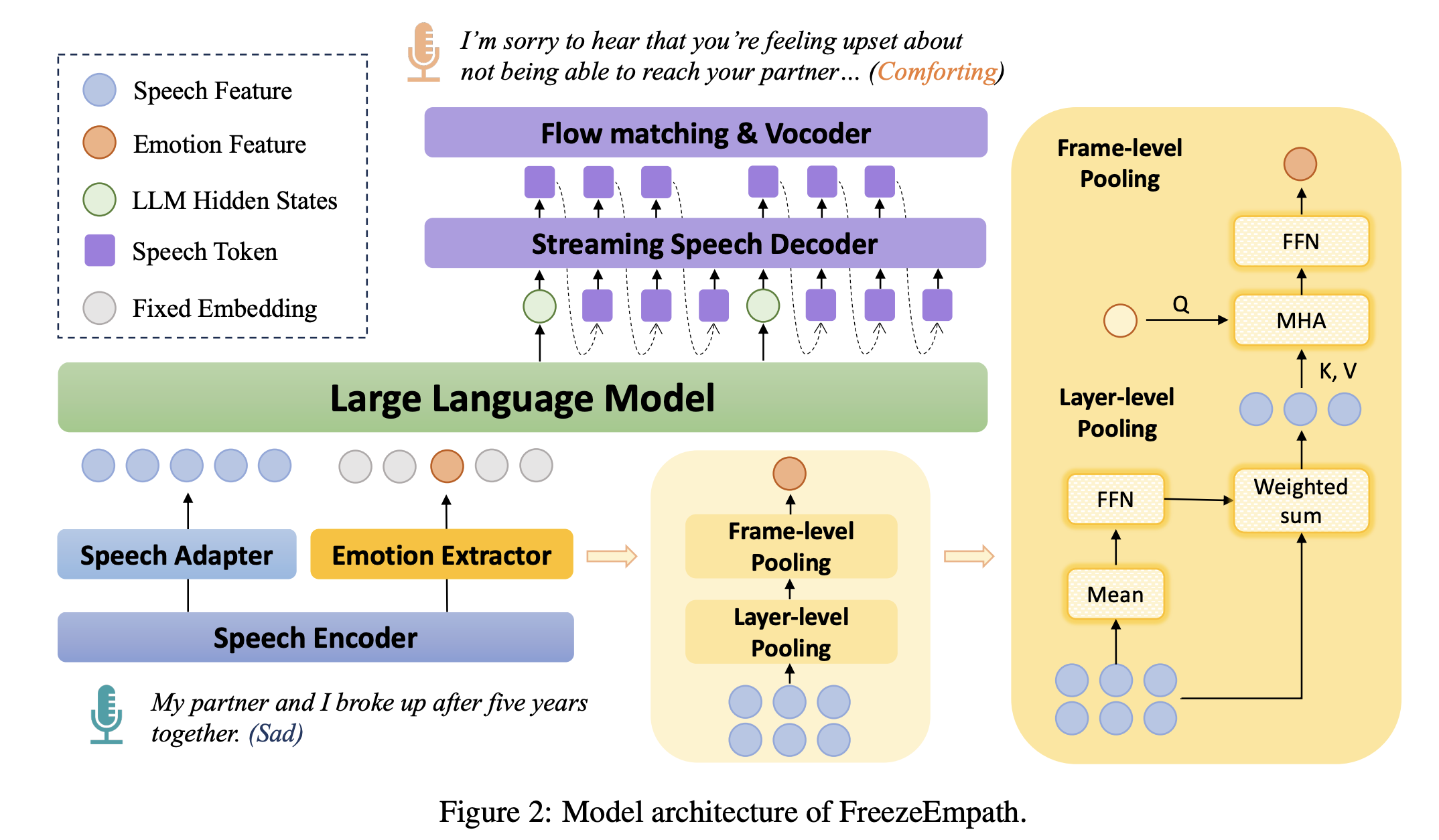

“The key insight of our method is that LLMs already possess inherent empathetic capability. If the frozen LLM is explicitly provided with the emotional tone of the speech, it can naturally generate a high-quality empathetic response, as shown in Figure 1.”

“In this paper, we propose FreezeEmpath, an empathetic spoken chatbot trained efficiently. The entire training process relies solely on existing neutral speech instruction data and SER data, while keeping the LLM’s parameters frozen. Experiments demonstrate that FreezeEmpath achieves strong results on several speech tasks, including empathetic dialogue, speech emotion recognition, and spoken question answering, demonstrating the effectiveness and efficiency of our method.”

Hong, Y., Zhou, Y., & Feng, Y. (2026). FreezeEmpath: Efficient Training for Empathetic Spoken Chatbots with Frozen LLMs. arXiv preprint arXiv:2604.18159.

https://arxiv.org/abs/2604.18159

Decoupling the effect of chain-of-thought reasoning: A human label variation perspective

Chunky

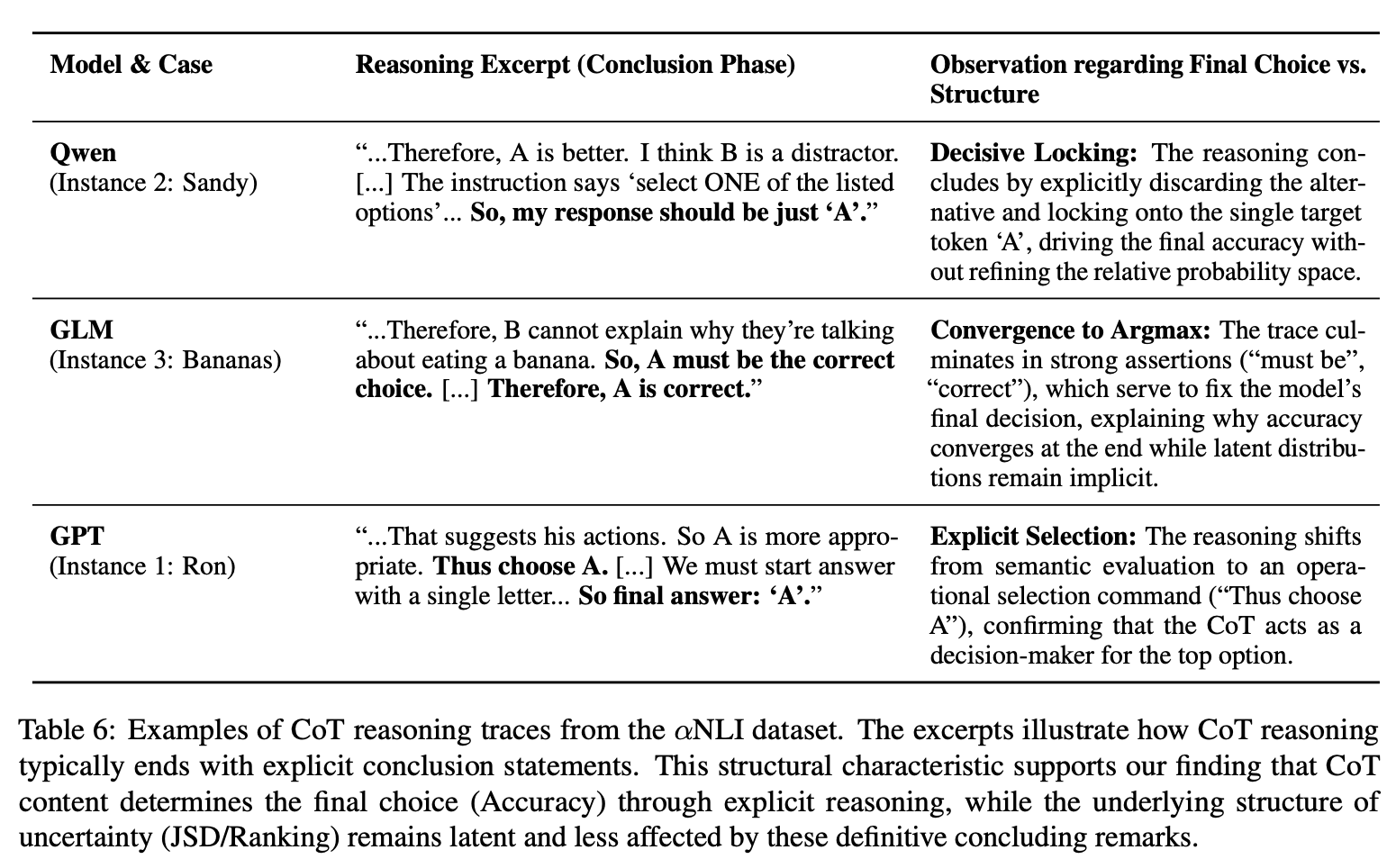

“RQ1: whether long CoT helps models better approximate human label distributions, and RQ2: whether any gains come from CoT reasoning or the model’s latent parametric knowledge.”

“Our analysis uncovers a notable “split influence”. While LLMs generally improve distributional alignment (lower JSD) after reasoning, this gain is not uniform across metrics. Using ANOVA to calculate the variance contribution percentage in our CrossCoT experiments, we find that final accuracy is overwhelmingly determined by the CoT content (≈99%), confirming the strong role of reasoning chain in steering the top-1 answer decision. In stark contrast, the distributional structure—ranking and probability allocation among non-argmax options—is largely immune to CoT, remaining governed by model priors (>80%). Step-wise analysis further clarifies this dynamic. While all metrics evolve throughout reasoning, changes in accuracy are predominantly driven by CoT and grow monotonically with later steps.”
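The distributional-alignment metric mentioned here, Jensen-Shannon divergence (JSD), is easy to compute by hand. The sketch below uses made-up label distributions (the `human`, `model_sharp`, and `model_soft` values are assumptions, not numbers from the paper) to show how two models can agree on the top-1 label while differing in how well they match the human spread over the other options.

```python
from math import log2


def kl_divergence(p, q):
    """KL(p || q) in bits; terms with p_i == 0 contribute nothing."""
    return sum(pi * log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def jensen_shannon_divergence(p, q):
    """Symmetric, bounded divergence: 0 for identical distributions, 1 bit at most."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Hypothetical distributions over three labels for one ambiguous item.
human = [0.60, 0.30, 0.10]        # annotators disagree
model_sharp = [0.98, 0.01, 0.01]  # right top-1 label, ambiguity collapsed
model_soft = [0.55, 0.35, 0.10]   # right top-1 label, spread preserved

jsd_sharp = jensen_shannon_divergence(human, model_sharp)
jsd_soft = jensen_shannon_divergence(human, model_soft)

print(f"JSD(human, sharp) = {jsd_sharp:.3f}")
print(f"JSD(human, soft)  = {jsd_soft:.3f}")
```

Both hypothetical models would score the same on accuracy for this item, but the sharper one lands further from the human distribution. That is the kind of gap between accuracy and distributional structure the authors are separating.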

“This exposes a structural limitation where standard CoT effectively collapses ambiguity for decision-making but fails to calibrate fine-grained uncertainty for alternative, plausible answers.”

Chen, B., Hu, T., Zhang, C., Litschko, R., Korhonen, A., & Plank, B. (2026). Decoupling the effect of chain-of-thought reasoning: A human label variation perspective. arXiv preprint arXiv:2601.03154.

https://arxiv.org/abs/2601.03154

Remember Me, Refine Me: A Dynamic Procedural Memory Framework for Experience-Driven Agent Evolution

Agent evolution…

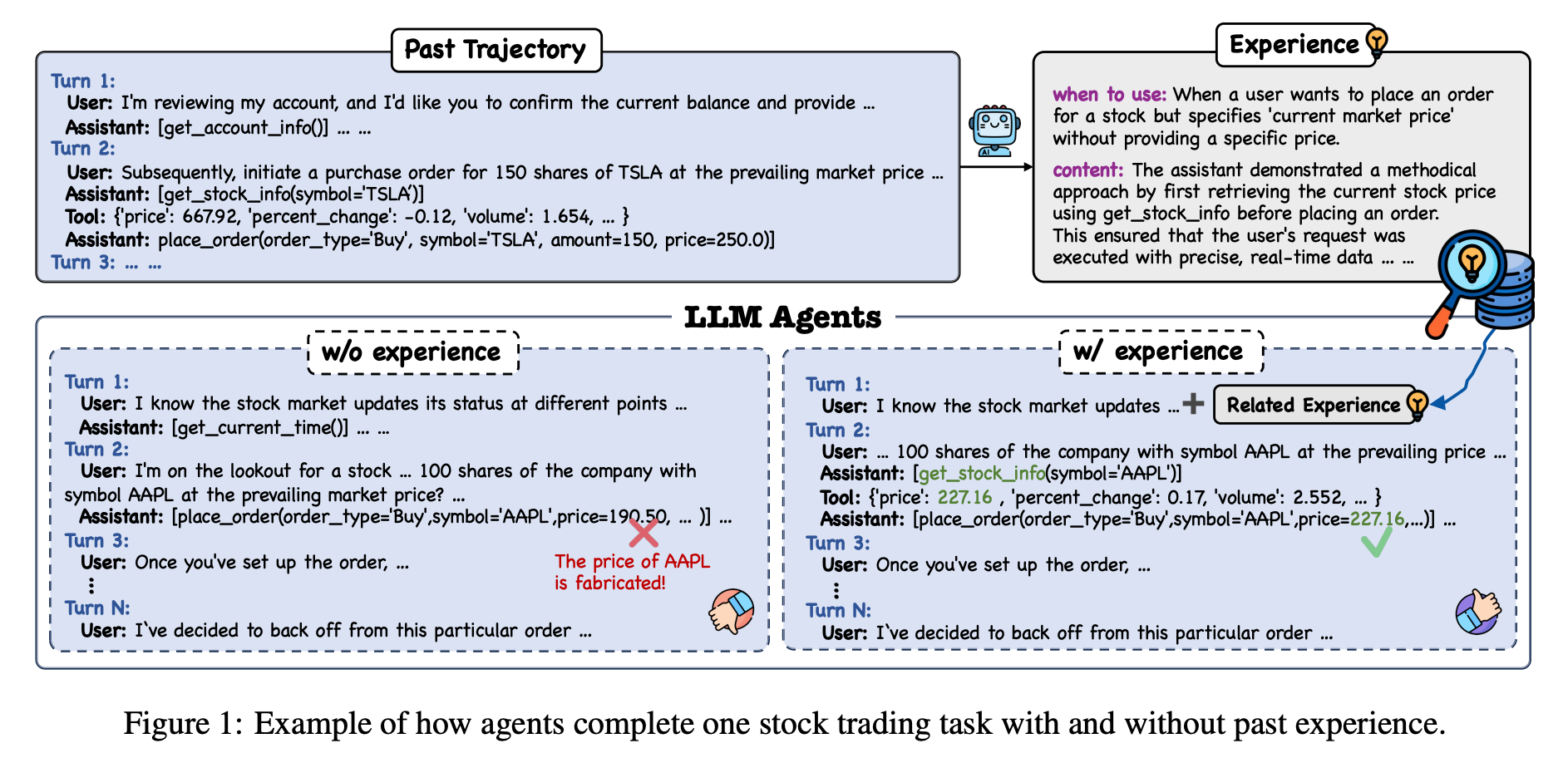

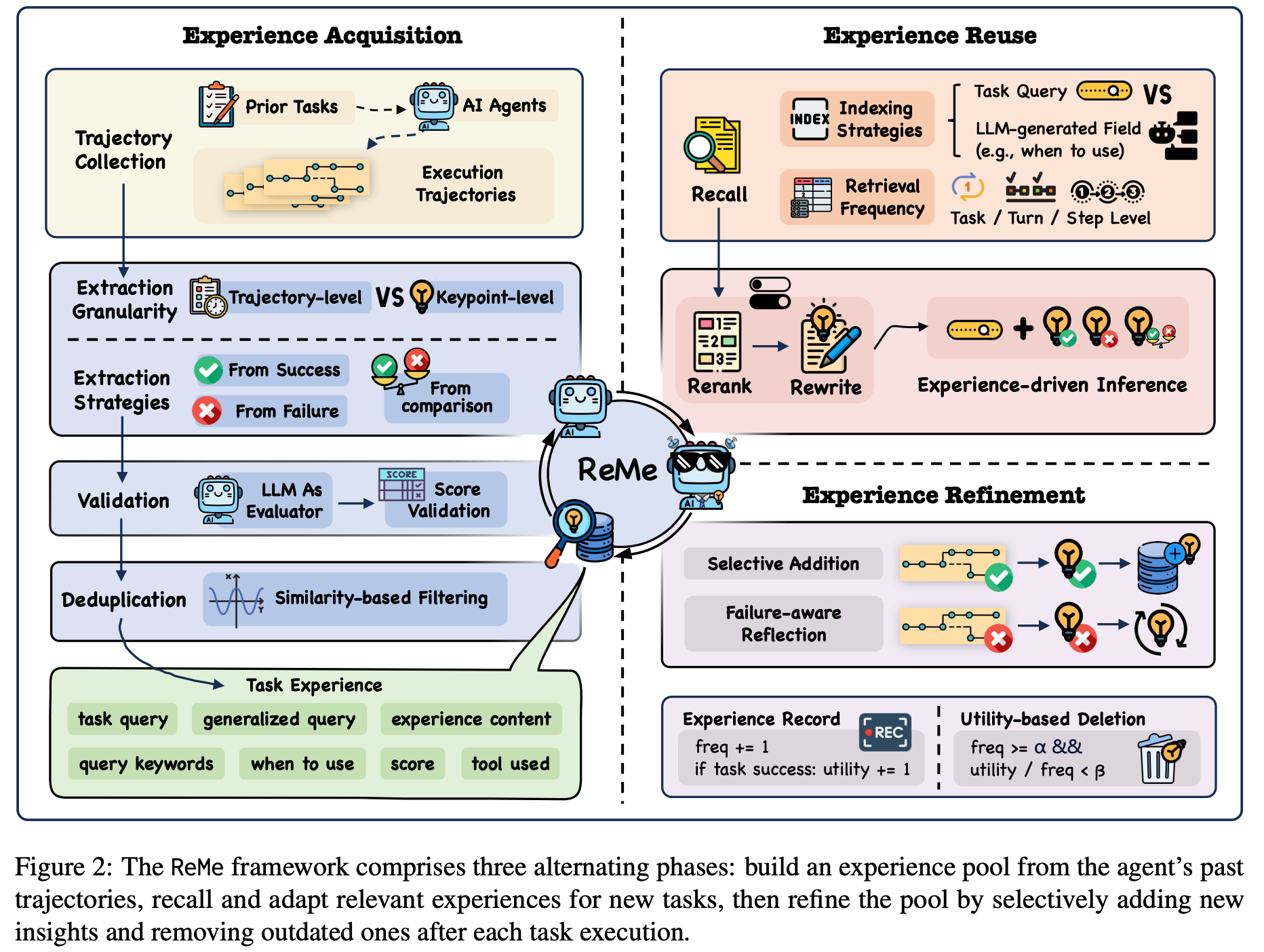

“To bridge the gap between static storage and dynamic reasoning, an ideal procedural memory system must function not merely as a database, but as an evolving cognitive substrate satisfying three core criteria: 1) High-quality Extraction: The system should distill generalized, reusable knowledge from noisy execution trajectories, rather than raw, problem-specific observations. 2) Task-grounded Utilization: Retrieved memories should be dynamically adapted to the specific requirements of the current task, maximizing their utility in novel scenarios. 3) Progressive Optimization: The memory pool should maintain its vitality through continuous updates, autonomously reinforcing effective entries while removing outdated ones to prevent degradation over time.”
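The three criteria map onto three operations on a memory pool: add a distilled insight, retrieve by relevance to the current task, and prune entries that stop paying off. The sketch below is an assumption-heavy illustration rather than ReMe's method: the class names, keyword-overlap retrieval, and the success-rate pruning rule are hypothetical stand-ins, and the LLM-based distillation and adaptation steps are left out entirely.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    insight: str       # distilled, reusable lesson (extraction step is stubbed out here)
    keywords: set      # crude stand-in for a learned retrieval index
    uses: int = 0
    successes: int = 0

    @property
    def utility(self):
        """Fraction of uses that ended in task success."""
        return self.successes / self.uses if self.uses else 0.0


class MemoryPool:
    def __init__(self, min_uses=3, min_utility=0.4):
        self.entries = []
        self.min_uses = min_uses
        self.min_utility = min_utility

    def add(self, insight, keywords):
        self.entries.append(Memory(insight, set(keywords)))

    def retrieve(self, task_keywords, k=2):
        """Return up to k entries ranked by keyword overlap with the current task."""
        task = set(task_keywords)
        scored = [(len(m.keywords & task), m) for m in self.entries]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [m for _, m in ranked[:k]]

    def record(self, memory, success):
        """Reinforce or penalize an entry after it was used on a task."""
        memory.uses += 1
        memory.successes += int(success)

    def prune(self):
        """Drop entries that have been tried enough times and rarely helped."""
        self.entries = [
            m for m in self.entries
            if m.uses < self.min_uses or m.utility >= self.min_utility
        ]


pool = MemoryPool(min_uses=2, min_utility=0.5)
pool.add("Check API pagination before aggregating results", ["api", "pagination"])
pool.add("Retry immediately on any error", ["api", "error"])

good, bad = pool.entries
for outcome in (True, True):
    pool.record(good, outcome)
for outcome in (False, False):
    pool.record(bad, outcome)

pool.prune()
remaining = [m.insight for m in pool.entries]
retrieved = [m.insight for m in pool.retrieve(["api", "rate-limit"])]
```

Entries with fewer than `min_uses` trials are kept in this sketch so that a new insight is not discarded before it has had a chance to prove itself. That is one possible design choice, not something the excerpt specifies.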

“We introduce ReMe, a dynamic procedural memory framework that evolves agent reasoning from blind trial-and-error to strategic experience reuse. By distilling structured knowledge from prior trajectories at a fine-grained level, ReMe enables agents to leverage critical insights, thus avoiding potential experience interference in coarse-grained approaches. Equipped with effective experience refinement, ReMe maintains a high-quality experience pool for agent evolution. Extensive experiments validate that ReMe significantly outperforms several baselines, with ablation studies highlighting the value of each core component in ReMe.”

Cao, Z., Deng, J., Yu, L., Zhou, W., Liu, Z., Ding, B., & Zhao, H. (2025). Remember me, refine me: A dynamic procedural memory framework for experience-driven agent evolution. arXiv preprint arXiv:2512.10696.

https://arxiv.org/abs/2512.10696

Reader Feedback

“Lots of signal on Glassdoor. I don’t know how I feel about all of it. Lots of fake reviews.”

Footnotes

I think of Digital Twins of Customers (DTOCs) as a General Purpose Technology (GPT). They’re among the most interesting subsets of LLM-as-a-Judge technology. DTOCs are difficult in commercial speech because they’re GPTs. And a DTOC, unto itself, isn’t a problem.

A DTOC isn’t a problem because it is not, in itself, dissatisfaction with some state. It’s ignorable. It’s a vitamin. It isn’t interferon.

An interesting problem causes valence, is eternal, and causes so much pain that people need a solution.

At the time of writing, we are experiencing an energy shock. Many companies are experiencing a lot of problems.

Most problems within the firm can be addressed with more revenue.

Not enough budget for an initiative? Get more revenue.

Valuation of the firm lagging? Get more revenue.

Losing market share? Get more revenue.

If it were trivial to get more revenue, in many cases, more would be gained.

But, as you may be aware: scarcity.

And scarcity drives competition which drives differentiation.

Logically this hangs together.

There are all kinds of offers that a firm could make. It gets broken down and expressed as the Right Person / Right Offer / Right Channel / Right Time quartet. What is meaningful differentiation and the perception of value of the right person? And who decides?

It’s a tremendous amount of uncertainty. And in general, sources of uncertainty that cause pain cause demand for cures to that pain.

“Christopher, because it’s complicated.”

It sure is.

And it’s finally knowable. Maybe DTOCs can help here.

I’m assuming that if knowledge of the demand curve were actively managed, then the firm would manage to get more revenue. Or, it would rationalize its decisions as to why it will leave money on the table.

There’s something there.
