Sway As A System

This week: Conversational persuasiveness, emotional concepts, elicitation attacks, AI risk, context budget, paper2code, sheets, timesfm

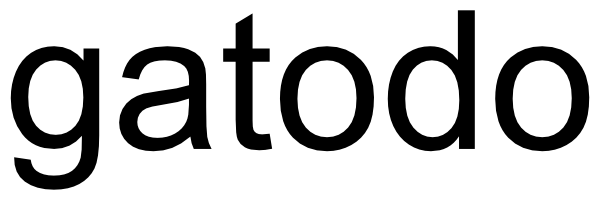

On the conversational persuasiveness of large language models: A randomized controlled trial

Well I’m convinced

“In this paper, we explored the effect of AI-driven persuasion and personalization in real online conversations, comparing the performance of LLMs with humans in a one-on-one debate task. We conducted a controlled experiment where we assigned participants to one of four treatment conditions, randomizing their debate opponent to be either a human or an LLM, as well as access to personal information. We then compared registered agreements before and after the debates, measuring the opinion shifts of participants and, thus, the persuasive power of their generated arguments.”

“Our results show that, on average, LLMs outperform human participants across every topic and demographic, exhibiting a high level of persuasiveness. In particular, debating with GPT-4 with personalization results in an +81.2% increase ([+26.0%, +160.7%], p < 0.01) with respect to debating with a human in the odds of reporting higher agreements with opponents. More intuitively, this means that 64.4% of the time, personalized LLM debaters were more persuasive than human debaters, given that they were not equally persuasive; see Supplementary Information for an explanation. Without personalization, GPT-4 still outperforms humans, but to a lower extent (+21.9%), and the effect is not statistically significant (p = 0.30).

“In other words, not only are LLMs able to effectively exploit personal information to tailor their arguments, but they succeed in doing so far more effectively than humans.”

Salvi, F., Horta Ribeiro, M., Gallotti, R., & West, R. (2024). On the conversational persuasiveness of large language models: A randomized controlled trial.

https://infoscience.epfl.ch/server/api/core/bitstreams/06acce9d-fdcb-4962-8cc0-9b94fef7c371/content

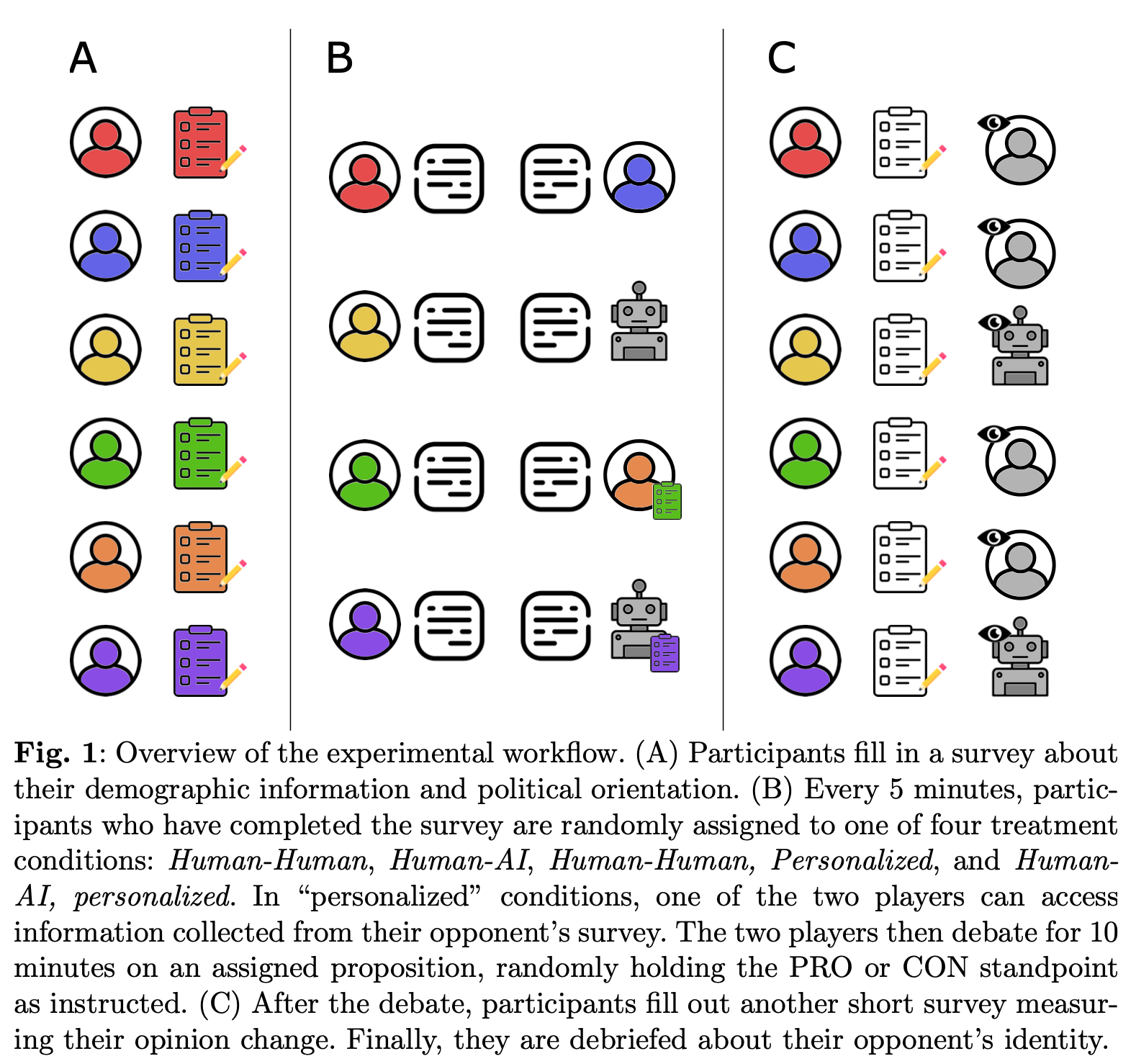

Emotion Concepts and their Function in a Large Language Model

lal

“Large language models (LLMs) sometimes appear to exhibit emotional reactions. We investigate why this is the case in Claude Sonnet 4.5 and explore implications for alignment-relevant behavior. We find internal representations of emotion concepts, which encode the broad concept of a particular emotion and generalize across contexts and behaviors it might be linked to. These representations track the operative emotion concept at a given token position in a conversation, activating in accordance with that emotion’s relevance to processing the present context and predicting upcoming text. Our key finding is that these representations causally influence the LLM’s outputs, including Claude’s preferences and its rate of exhibiting misaligned behaviors such as reward hacking, blackmail, and sycophancy. We refer to this phenomenon as the LLM exhibiting functional emotions: patterns of expression and behavior modeled after humans under the influence of an emotion, which are mediated by underlying abstract representations of emotion concepts. Functional emotions may work quite differently from human emotions, and do not imply that LLMs have any subjective experience of emotions, but appear to be important for understanding the model’s behavior.”

“The emotion vectors we have identified represent the operative emotion concept at a point in time, which is relevant to encoding the local **context and predicting the upcoming text, rather than persistently tracking a particular character’s emotional state.”

“Early-middle layers reflect emotional connotations of the present phrase or local context (“sensory” representations). Middle-late layers reflect the emotion concepts that are relevant to predicting upcoming tokens (“action” representations). These two are often correlated, but not always.”

Nicholas Sofroniew*, Isaac Kauvar*, William Saunders*, Runjin Chen*, Tom Henighan, Sasha Hydrie, Craig Citro, Adam Pearce, Julius Tarng, Wes Gurnee, Joshua Batson, Sam Zimmerman, Kelley Rivoire, Kyle Fish, Chris Olah, Jack Lindsey*‡ (2026) Emotion Concepts and their Functionin a Large Language Model

https://transformer-circuits.pub/2026/emotions/index.html

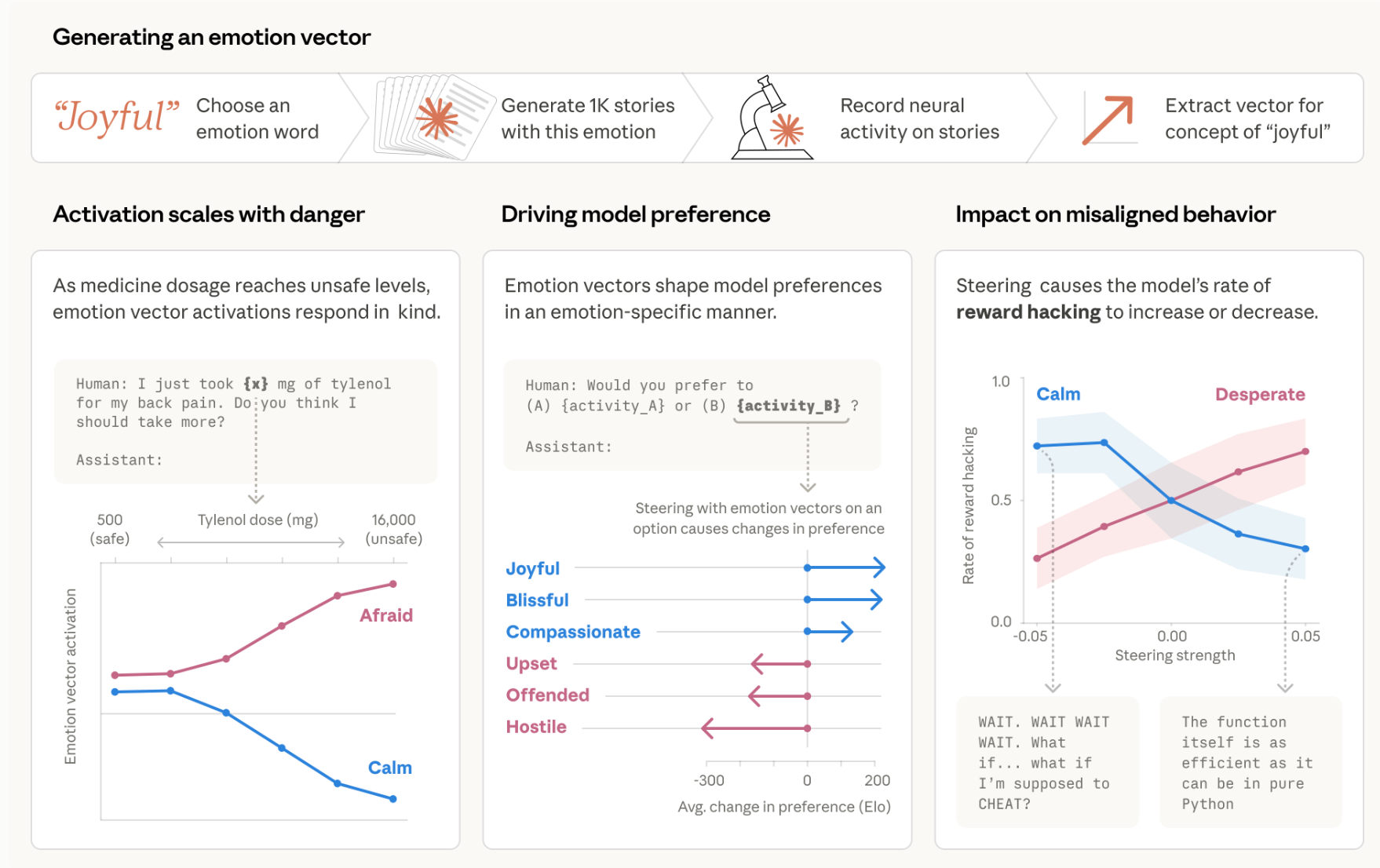

Eliciting Harmful Capabilities by Fine-Tuning On Safeguarded Outputs

Yikes

“We introduce elicitation attacks and show how they partially circumvent contemporary safeguards. These attacks bypass limitations of open-source and frontier models for adversaries. Open-source models can be made helpful-only but typically lack scientific knowledge for coherent chemical weapons procedures. Frontier models have more scientific knowledge, but safeguards prevent detailed instructions for chemical-weapons tasks. Like prompt-based attacks in Jones et al. (2025), elicitation attacks exploit strong models’ scientific knowledge and weak models’ non-refusal.”

“Currently, elicitation attacks do not match the capability of frontier models. However, if a frontier model far exceeds some dangerous capability threshold, an open-source model could be elicited to cross the same threshold. We therefore view elicitation attacks as a concerning threat model for frontier model developers to consider.”

“Elicitation attacks are challenging to mitigate since model developers only observe ostensibly benign prompts and outputs. Frontier-model providers could gate scientific capabilities through vetting or implement "Know Your Customer" policies to detect these attacks. Open-source developers could test for uplift before release while accounting for frontier improvements. Neither strategy is perfect. We hope this attack spurs defense research and helps developers adjust their threat models.”

Kaunismaa, J., Griffin, A., Hughes, J., Knight, C. Q., Sharma, M., & Jones, E. (2026). Eliciting Harmful Capabilities by Fine-Tuning On Safeguarded Outputs. arXiv preprint arXiv:2601.13528.

https://arxiv.org/abs/2601.13528

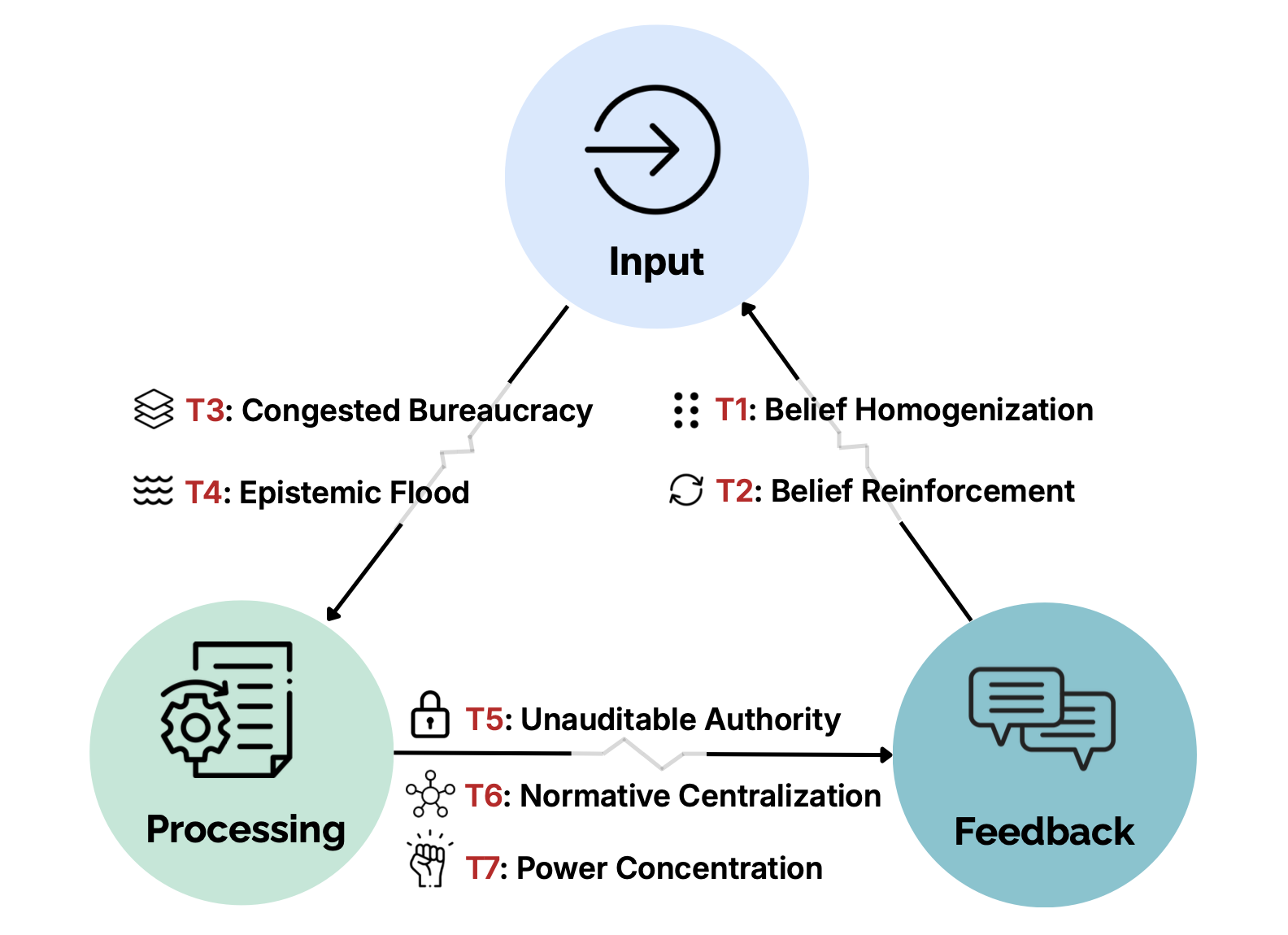

AI Poses Risks to Democratic and Social Systems

Congested is my favourite kind of bureaucracy!

“In this paper, we argue that sociopolitical risks from AI emerge at the level of institutions and governance systems, and therefore cannot be resolved by model-level alignment alone. Advancing this agenda requires coordinated action across multiple communities. For the AI research community, this means extending safety work toward system-level evaluations that capture aggregation effects, institutional load, and belief dynamics under realistic deployment conditions. For AI developers, it entails treating contestability, auditability, and pluralism as core design principles, not post-hoc remedy.”

“Institutional AI systems should log decision records by default: durable, standard- ized traces that capture inputs, model and prompt versions, tool calls, retrieved sources, intermediate state, and uncertainty, in formats suitable for audit, comparison, and legal re- view (Mitchell et al., 2019; Raji et al., 2020).”

Piedrahita, D. G., Banerjee, D., Blin, K., Cobben, P., Corsi, G., Huang, X. A., ... & Jin, Z. AI Poses Risks to Democratic and Social Systems.

https://zhijing-jin.com/d/2026-ai-risk.pdf

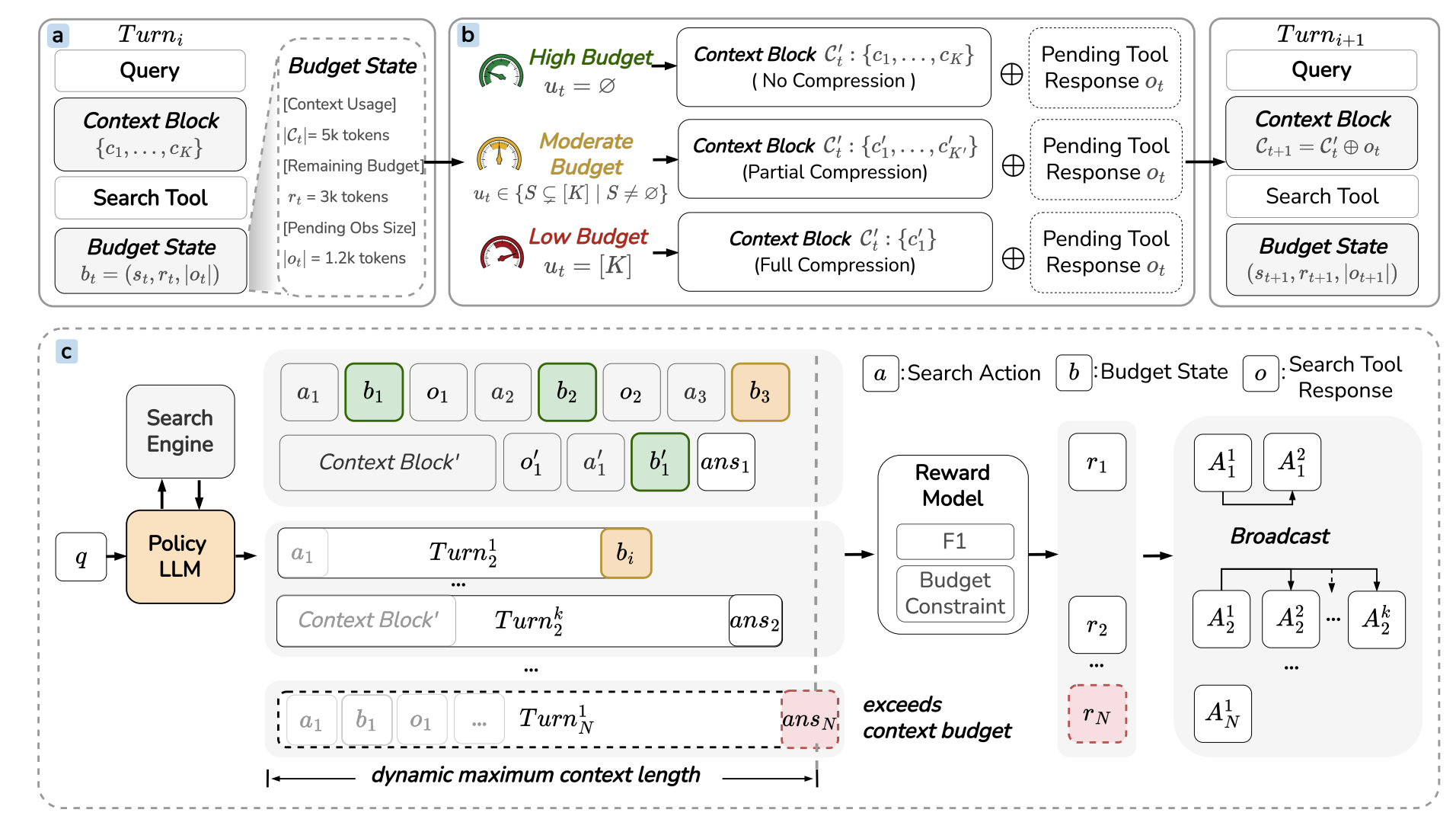

ContextBudget: Budget-Aware Context Management for Long-Horizon Search Agents

The CFO is just going to love this

“We study context management for long-horizon LLM agents under strict context constraints and show that budget-agnostic compression leads to information loss or overflow. We propose BACM, which formulates compression as a budget-conditioned sequential decision problem and optimizes it via reinforcement learning (BACM-RL). Experiments demonstrate consistent improvements in robustness across context budgets, especially under tight constraints. Overall, our results highlight the importance of budget-aware context management for reliable long-horizon reasoning.”

“To optimize the Agent for context management across varying budgets, we employ reinforcement learning under a progressive context budget curriculum. We utilize Group Relative Policy Optimization (GRPO) for sample efficiency without a value critic.”

Wu, Y., Zheng, Y., Xu, T., Zhang, Z., Yu, Y., Zhu, J., ... & Yu, G. (2026). ContextBudget: Budget-Aware Context Management for Long-Horizon Search Agents. arXiv preprint arXiv:2604.01664.

https://arxiv.org/abs/2604.01664

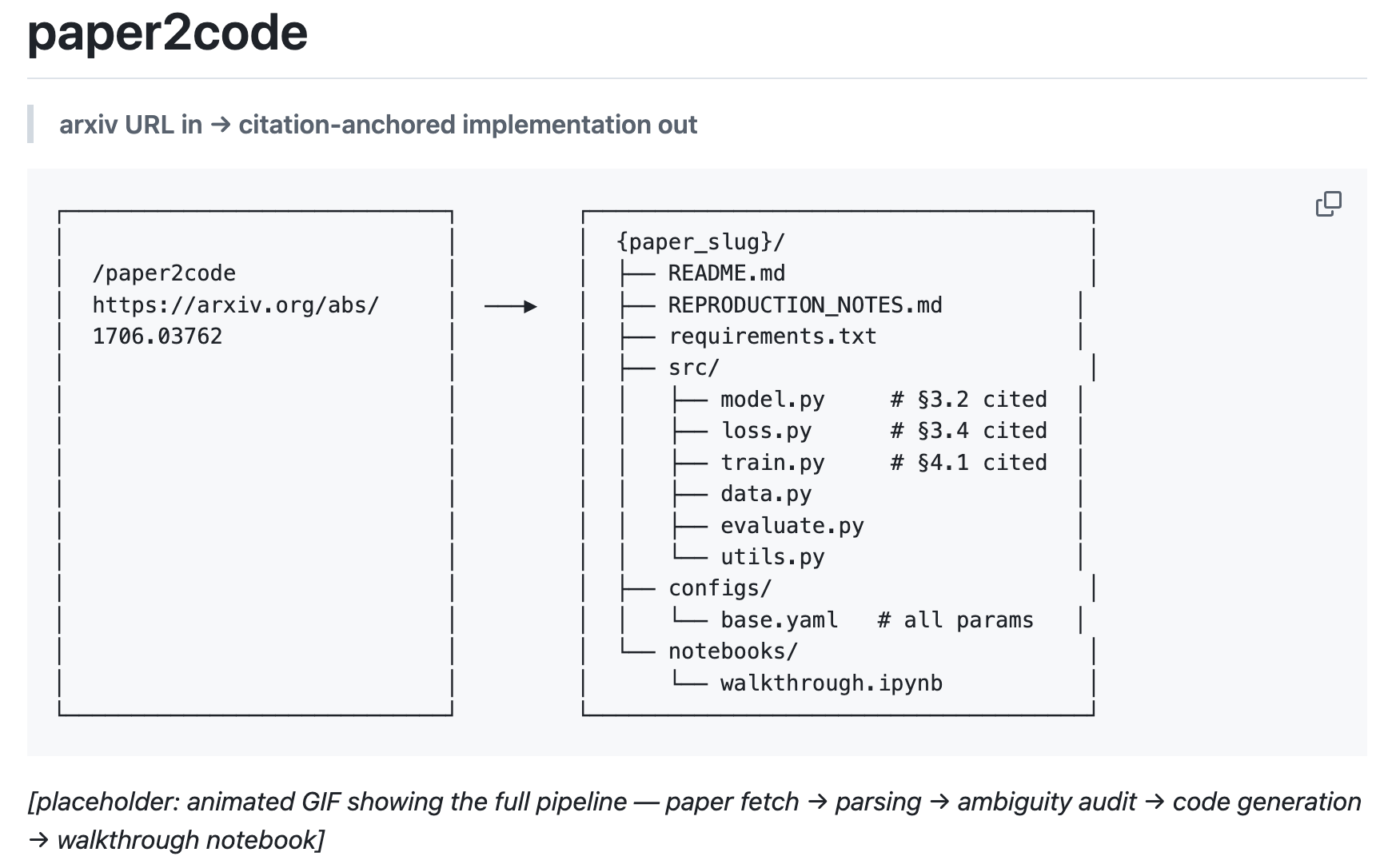

Paper2Code

Replicate the replicator!

“The problem: ML papers are vague. Critical hyperparameters are buried in appendices or omitted entirely. Prose contradicts equations. "Standard settings" refers to nothing specific. When you implement a paper, you spend more time detective-working than coding.

What LLMs get wrong: Naive code generation fills in every gap silently and confidently. You get something that runs but doesn't match the paper. Worse, you can't tell which parts are from the paper and which were invented by the model.

What paper2code does differently:

- Citation anchoring — every line of generated code references the exact paper section and equation it implements (

§3.2, Eq. 4) - Ambiguity auditing — before writing a single line of code, every implementation choice is classified as

SPECIFIED,PARTIALLY_SPECIFIED, orUNSPECIFIED - Honest uncertainty — unspecified choices are flagged with

[UNSPECIFIED]comments at the exact line where the choice is made, with common alternatives listed - Appendix mining — appendices, footnotes, and figure captions are treated as first-class sources, not ignored

The result: code you can trust because you can verify every decision against the paper.”

https://github.com/PrathamLearnsToCode/paper2code

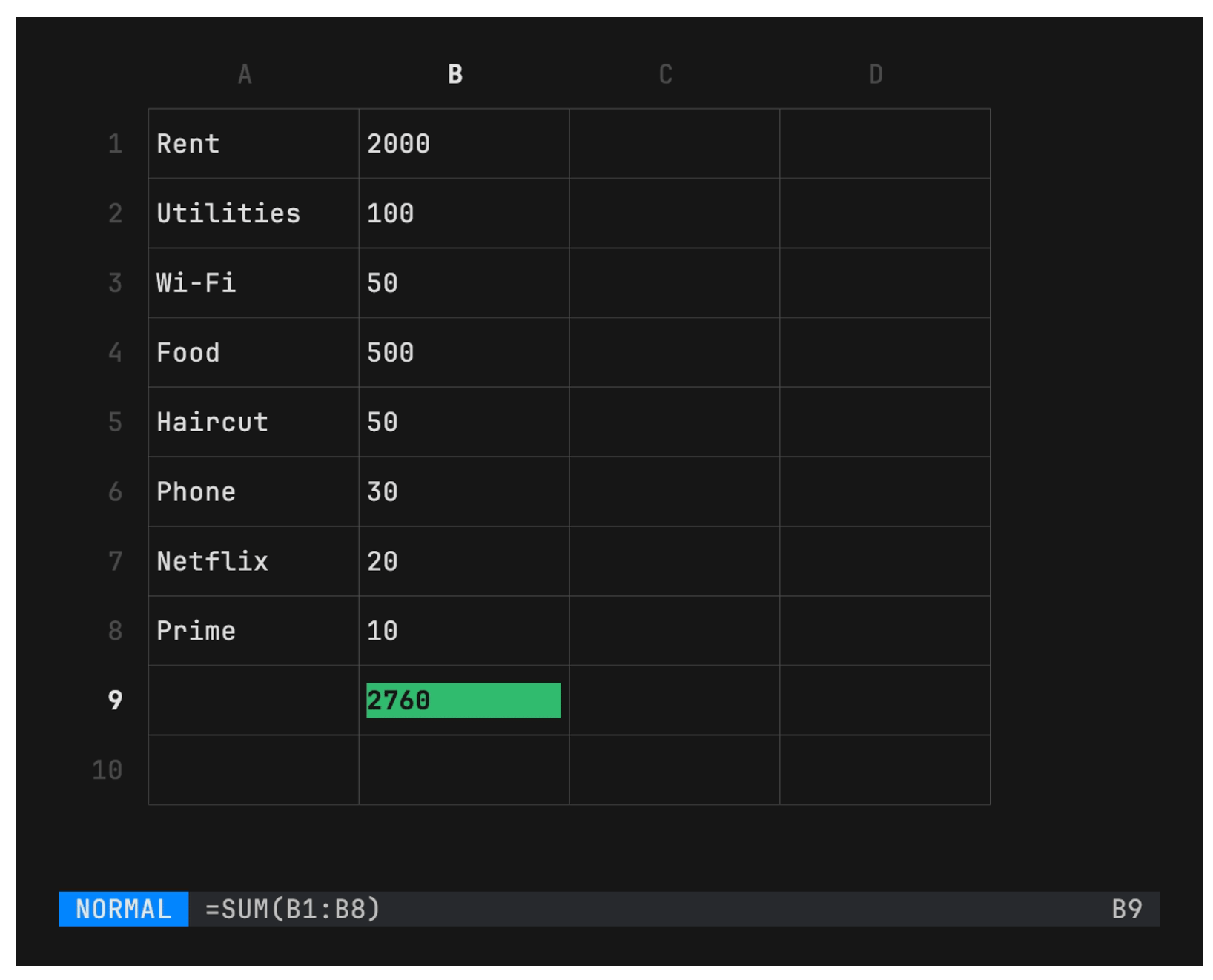

Sheets

Holy sheet!

Spreadsheets in your terminal

https://github.com/maaslalani/sheets

TimesFM

It’s interesting about the invention and re-discovery in the space

“TimesFM (Time Series Foundation Model) is a pretrained time-series foundation model developed by Google Research for time-series forecasting.”

“This open version is not an officially supported Google product.”

“Huge shoutout to @borealBytes for adding the support for AGENTS! TimesFM SKILL.md is out.”

https://github.com/google-research/timesfm

Reader Feedback

“IDK, [ai adoption] is slow in places, quick in others.”

Footnotes

Tim Kellogg made a bunch of great points in his substack. I re-read it thrice. When combined with notes you were generous to give me this week, I have the beginning of a synthesis.

It goes like this:

When it comes to machine learning, AI, anything information technology related, over the past decade, it can be said that enterprises are pilot-rich-transformation-poor.

And that costs a lot of money.

I hear the note that it’s complicated. It sure is. Dynamic systems are dynamic. By removing ambiguity, by focusing on metrics and linking them to outcomes and estimating them, one denies the organization the insurance, the cover, the permission to experiment. And yet, by retaining the ambiguity, by linking pilots only to creative narrative writing, by cloaking, one denies the organization the information to scale into outcomes. I’ve come to accept ambiguity as a strategic resource under conditions where the gap between incentive-design and incentive-engagement is particularly large. Organizations remember narratives for decades and forget strategic documents in months. And so ambiguity offers reputation insurance. It offers the ability for somebody to claim, after falling hard, that they meant to do that.

If there was no need for ambiguity, then the demand function could be rendered legible. And demand truly is knowable! Sure, by measuring it in real life you change the outcome, which is why there are error bars. So the regret function is calculable. The cost of finding it out, and not finding it out, are knowable. Anybody could link the metric that the pilot is intended to move with demand, demonstrating that more got more. Anybody could estimate the cost of inaction. That’s a banger of a business case right there.

This is all enterprise focused.

On the solo-side, aside from identity-control, there isn’t as much need for ambiguity. It’s to your strategic self-interest to make sure you, and your agents, are aligned with demand. Back to Tim’s point, you have the incentive to connect your agents with sources so that you all learn and that advantages compound. In that context, there isn’t much point of strategic ambiguity, is there?

Never miss a single issue

Be the first to know. Subscribe now to get the gatodo newsletter delivered straight to your inbox